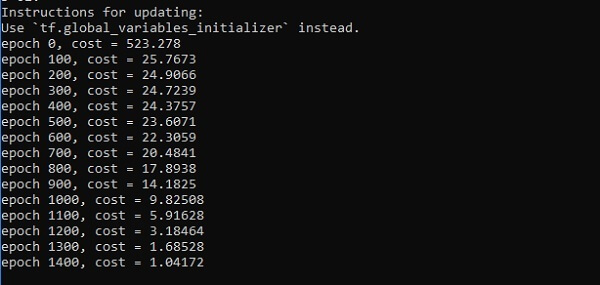

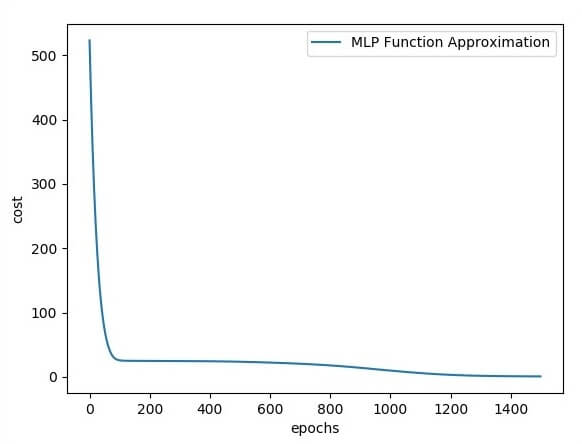

Hidden Layer Perceptron in TensorFlow

A hidden layer in an artificial neural network is a layer between the input layer and the output layer, in which artificial neurons take in a set of weighted inputs and produce an output through an activation function. It is a part of nearly any neural network in which engineers simulate the types of activity that go on in the human brain.

Hidden layers can be set up in several ways. In many cases the weights are assigned randomly at first and are then fine-tuned and calibrated through a process called backpropagation.

An artificial neuron in the hidden layer of a perceptron works like a biological neuron in the brain: it takes in its probabilistic input signals, works on them, and converts them into an output corresponding to the biological neuron's axon.

Layers after the input layer are called hidden because they are not directly exposed to the input. The simplest network structure has a single neuron in the hidden layer that directly outputs the value.

Deep learning refers to having many hidden layers in a neural network. Such networks are called deep because they would have been unimaginably slow to train historically, but may take seconds or minutes to train using modern techniques and hardware. A single hidden layer builds a simple network.

The output of the code for the hidden layers of the perceptron is the following illustration of the function approximation by the layers:

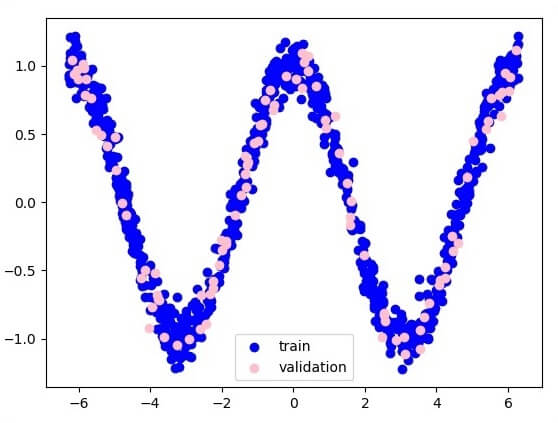

The plot shows two data series, train and validation, each tracing the shape of a W. They are drawn in distinct colors, as indicated in the legend.
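To make the ideas above concrete (weighted inputs, an activation function, randomly assigned weights, and fine-tuning through backpropagation), here is a minimal sketch of a network with one hidden layer. It is written in plain Python rather than TensorFlow so that every step is visible, and it is not the listing that produced the figure. The helper names (`init_layer`, `forward`, `train_step`, `mean_loss`), the eight `tanh` hidden neurons, the learning rate, and the target function y = x² are all assumptions chosen for illustration.

```python
import math
import random

def init_layer(n_in, n_out, rng):
    """Randomly assigned weights and zero biases for one dense layer."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(x, hidden, output):
    """Forward pass: inputs -> tanh hidden layer -> one linear output neuron."""
    w_h, b_h = hidden
    w_o, b_o = output
    # Each hidden neuron sums its weighted inputs and applies the activation.
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(w_h, b_h)]
    y = sum(w * hj for w, hj in zip(w_o[0], h)) + b_o[0]
    return h, y

def train_step(x, target, hidden, output, lr):
    """One backpropagation update for the loss 0.5 * (y - target)^2."""
    w_h, b_h = hidden
    w_o, b_o = output
    h, y = forward(x, hidden, output)
    err = y - target  # derivative of the loss with respect to y
    for j, hj in enumerate(h):
        # Error reaching hidden neuron j, using tanh'(z) = 1 - tanh(z)^2.
        # Computed before the output weight w_o[0][j] is updated.
        grad_h = err * w_o[0][j] * (1.0 - hj * hj)
        w_o[0][j] -= lr * err * hj
        for i, xi in enumerate(x):
            w_h[j][i] -= lr * grad_h * xi
        b_h[j] -= lr * grad_h
    b_o[0] -= lr * err
    return 0.5 * err * err

def mean_loss(data, hidden, output):
    """Average loss over a dataset of (inputs, target) pairs."""
    return sum(0.5 * (forward(x, hidden, output)[1] - t) ** 2
               for x, t in data) / len(data)

rng = random.Random(0)           # fixed seed so runs are repeatable
hidden = init_layer(1, 8, rng)   # 1 input -> 8 hidden neurons (assumed size)
output = init_layer(8, 1, rng)   # 8 hidden neurons -> 1 output

# Illustrative target: approximate y = x^2 on 21 points in [-1, 1].
data = [([k / 10.0], (k / 10.0) ** 2) for k in range(-10, 11)]

loss_before = mean_loss(data, hidden, output)
for epoch in range(200):
    for x, t in data:
        train_step(x, t, hidden, output, lr=0.05)
loss_after = mean_loss(data, hidden, output)

print(f"mean loss before training: {loss_before:.4f}")
print(f"mean loss after training:  {loss_after:.4f}")
```

The weights start out random and are adjusted one example at a time, so the printed loss should fall as the hidden layer learns to approximate the target. In TensorFlow the same structure would normally be expressed with the library's own layer and optimizer abstractions instead of hand-written loops.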
