Difference between Model Parameter and Hyperparameter

For a machine learning beginner, many terms can seem confusing, and clearing up this confusion is important for becoming proficient in the field. "Model parameters" and "hyperparameters" are a good example: not having a clear understanding of the two terms is a common struggle for beginners. To clear up this confusion, let's look at the difference between a parameter and a hyperparameter and how the two are related to each other.

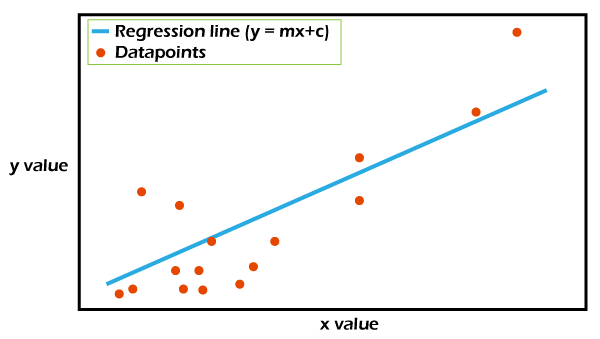

What is a Model Parameter?

Model parameters are configuration variables that are internal to the model, and the model learns them on its own from the data. Examples include the weights (coefficients) of the independent variables in a linear regression model, the weights (coefficients) of the independent variables in an SVM, the weights and biases of a neural network, and the cluster centroids in clustering. We can understand model parameters using the image below:

The above plot shows the model representation of simple linear regression. Here, x is the independent variable, y is the dependent variable, and the goal is to fit the best regression line to the given data so that it defines a relationship between x and y. The regression line is given by the equation:

$$y = mx + c$$

where m is the slope of the line and c is its intercept. These two values are calculated by fitting the line so that it minimizes the RMSE (root mean squared error) on the data, and they are known as model parameters. Some key points about model parameters are as follows:

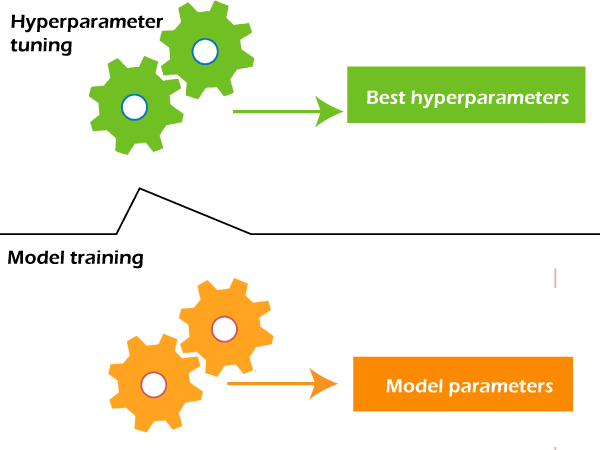
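As a rough illustration of parameters being learned from data, the following sketch estimates m and c with the closed-form ordinary least-squares formulas. Minimizing the sum of squared errors gives the same line as minimizing the RMSE, because RMSE is a monotonic function of that sum. The helper names `fit_line` and `rmse` and the toy data are hypothetical, chosen only for this example.

```python
# Illustrative sketch (hypothetical helpers, not from the original article):
# learn the model parameters m (slope) and c (intercept) of y = m*x + c
# using closed-form ordinary least squares.

def fit_line(xs, ys):
    """Return (m, c) minimizing the squared error (equivalently the RMSE)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = sum of co-deviations of x and y / sum of squared deviations of x
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    m = sxy / sxx
    # The least-squares line passes through the point (mean_x, mean_y)
    c = mean_y - m * mean_x
    return m, c


def rmse(xs, ys, m, c):
    """Root mean squared error of the line y = m*x + c on the data."""
    return (sum((y - (m * x + c)) ** 2 for x, y in zip(xs, ys)) / len(xs)) ** 0.5


if __name__ == "__main__":
    # Toy data generated from y = 2x + 1 (made up for illustration)
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0, 3.0, 5.0, 7.0, 9.0]
    m, c = fit_line(xs, ys)
    print(f"m = {m:.2f}, c = {c:.2f}, RMSE = {rmse(xs, ys, m, c):.2f}")
    # prints: m = 2.00, c = 1.00, RMSE = 0.00
```

Nobody supplies m or c here: both come entirely out of the data, which is what makes them model parameters.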

What is a Model Hyperparameter?

Hyperparameters are parameters that are explicitly defined by the user to control the learning process.
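To make the distinction concrete, here is a minimal sketch that assumes plain batch gradient descent on the mean squared error as the learning procedure for the line y = m*x + c. This training method is swapped in for illustration; the article does not prescribe one. The function name `train_linear_regression` and the toy data are hypothetical. The user picks `learning_rate` and `epochs` before training, while the values of m and c come out of training.

```python
# Illustrative sketch (hypothetical function, not from the original article):
# fit y = m*x + c by batch gradient descent. learning_rate and epochs are
# hyperparameters chosen by the user; m and c are model parameters learned
# from the data.

def train_linear_regression(xs, ys, learning_rate=0.05, epochs=2000):
    """Return (m, c) after `epochs` gradient-descent steps on the MSE."""
    n = len(xs)
    if n == 0 or n != len(ys):
        raise ValueError("xs and ys must be non-empty and the same length")
    m, c = 0.0, 0.0  # model parameters, starting from an arbitrary guess
    for _ in range(epochs):
        # Residuals of the current line on every training point
        errors = [m * x + c - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to m and c
        grad_m = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_c = 2.0 / n * sum(errors)
        # The learning_rate hyperparameter controls the size of each update
        m -= learning_rate * grad_m
        c -= learning_rate * grad_c
    return m, c


if __name__ == "__main__":
    # Toy data from y = 2x + 1 (made up for illustration)
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0, 3.0, 5.0, 7.0, 9.0]

    # A suitable learning rate: the learned parameters approach m = 2, c = 1
    print(train_linear_regression(xs, ys, learning_rate=0.05, epochs=2000))

    # Too large a learning rate on the same data: the updates overshoot and
    # the parameters blow up instead of converging
    print(train_linear_regression(xs, ys, learning_rate=0.2, epochs=50))
```

The same data and the same code give very different parameters depending only on the hyperparameter values, which is why hyperparameters such as the learning rate are usually tuned rather than left at arbitrary values.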

Some examples of hyperparameters are the learning rate for training a neural network, K in the KNN algorithm, etc.

Comparison table between Parameters and Hyperparameters

Conclusion

In this article, we have covered clear definitions of model parameters and hyperparameters and the difference between them. In brief, model parameters are internal to the model and are estimated automatically from data, whereas hyperparameters are set manually, are used in optimizing the model, and help in estimating the model parameters.
