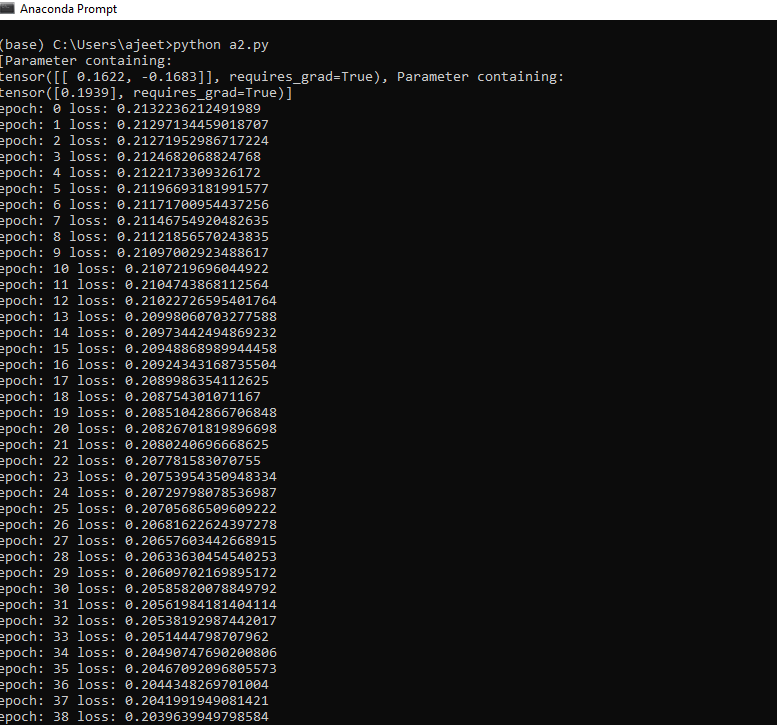

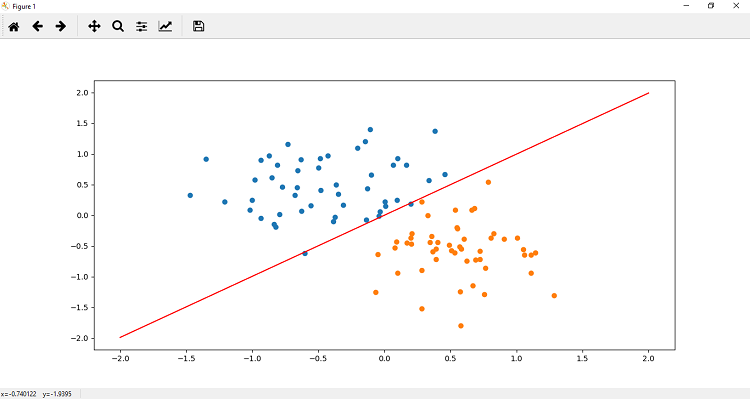

Training of Perceptron Model

Training the perceptron model is similar to training the linear regression model. We initialize our neural model, which has two input nodes in the input layer and a single output node with a sigmoid activation function. When we plot the model against the data, we find that it does not fit the data well. We need to train the model so that it learns the optimal weight and bias parameters and fits the data. Training consists of the following steps:

Step 1: First, we define the criterion by which we compute the error of our model. Recall that this criterion is cross entropy. Because we are dealing with only two classes, the loss is measured with binary cross-entropy loss (BCELoss), which is imported from the nn module.

Step 2: Next, we update the parameters using an optimizer. We define an optimizer that uses the stochastic gradient descent (SGD) algorithm.

Step 3: Now we train the model for a specified number of epochs, as we did with the linear model, so the training code is similar to the linear model's training loop.

Finally, we plot the trained model, whose decision boundary is a straight line, by calling the plotfit() method.
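The three steps above can be sketched in PyTorch roughly as follows. This is a minimal sketch, not the tutorial's original code: the toy two-cluster dataset, the `Model` class, the learning rate of 0.1, and the 1000 epochs are assumptions chosen for illustration. Because the whole dataset is passed through the model on every epoch, each `torch.optim.SGD` update here is effectively a full-batch gradient descent step; truly stochastic updates would iterate over mini-batches.

```python
import torch
import torch.nn as nn

torch.manual_seed(2)

# Hypothetical toy data: two Gaussian clusters in 2-D, labelled 0 and 1
n_pts = 100
centers = torch.tensor([[-0.5, 0.5], [0.5, -0.5]])
x0 = torch.randn(n_pts // 2, 2) * 0.25 + centers[0]
x1 = torch.randn(n_pts // 2, 2) * 0.25 + centers[1]
x_data = torch.cat([x0, x1])
y_data = torch.cat([torch.zeros(n_pts // 2, 1), torch.ones(n_pts // 2, 1)])


class Model(nn.Module):
    """Perceptron: two input nodes, one output node with a sigmoid activation."""

    def __init__(self, input_size, output_size):
        super().__init__()
        self.linear = nn.Linear(input_size, output_size)

    def forward(self, x):
        return torch.sigmoid(self.linear(x))


model = Model(2, 1)

# Step 1: binary cross-entropy loss, since there are only two classes
criterion = nn.BCELoss()
# Step 2: optimizer that updates the weights and bias by gradient descent
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# Step 3: train for a fixed number of epochs
epochs = 1000
losses = []
for epoch in range(epochs):
    y_pred = model(x_data)            # forward pass: probabilities in (0, 1)
    loss = criterion(y_pred, y_data)  # compare predictions with labels
    losses.append(loss.item())
    optimizer.zero_grad()             # clear gradients from the previous step
    loss.backward()                   # compute gradients of the loss
    optimizer.step()                  # update weights and bias

# Fraction of training points classified correctly at a 0.5 threshold
with torch.no_grad():
    accuracy = ((model(x_data) >= 0.5).float() == y_data).float().mean().item()
print(f"final loss {losses[-1]:.4f}, training accuracy {accuracy:.2f}")
```

After training, `model.linear.weight` holds the learned weights w1, w2 and `model.linear.bias` holds b. The decision boundary is the line w1·x1 + w2·x2 + b = 0, which is the straight line a plotting helper would draw over the data.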
