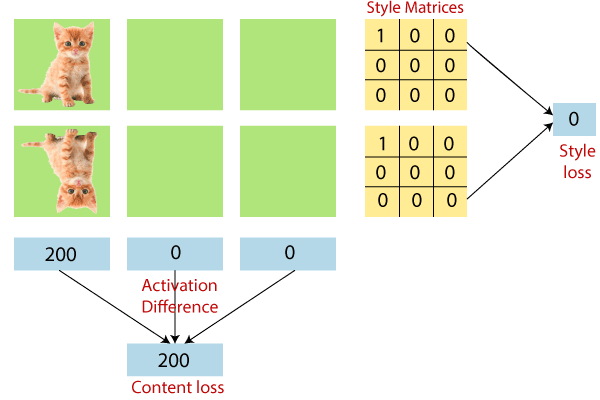

Gram Matrix

The Gram matrix arises from function fitting in a finite-dimensional space; its entries are then the inner products of the basis functions of the finite-dimensional subspace.

We have seen how to compute the style loss, but we have not yet been shown why the style loss is computed using the Gram matrix. The Gram matrix captures the "distribution of features" of a set of feature maps in a given layer.

Note: We don't think the above question has been answered satisfactorily, so let us take a shot at explaining it more intuitively. Suppose we have the following feature maps. For simplicity, we consider only three feature maps, and two of them are entirely inactive. We have one feature map set where the first feature map looks like a nature picture, and a second feature map set where the first feature map looks like a dark cloud. If we calculate the content and style losses manually, we get these values. This means that we have not lost style information between the two feature map sets. However, the content is different.
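The idea that style can be preserved while content changes can be checked numerically. The following is a minimal NumPy sketch, not the tutorial's own code: the `gram_matrix` helper, the toy 2x2 values, and the unnormalized loss formulas are assumptions chosen for illustration. It builds two feature map sets with three maps each, two of them all zeros, where the first map of the second set is a vertically flipped copy of the first map of the first set.

```python
import numpy as np

def gram_matrix(features):
    """Gram matrix of a feature map set of shape (height, width, channels)."""
    h, w, c = features.shape
    flat = features.reshape(h * w, c)  # each column is one flattened feature map
    return flat.T @ flat               # (c, c) inner products between feature maps

# Hypothetical toy data: only the first of three feature maps is active.
pattern = np.array([[1.0, 2.0],
                    [3.0, 4.0]])

set_a = np.zeros((2, 2, 3))
set_a[:, :, 0] = pattern

set_b = np.zeros((2, 2, 3))
set_b[:, :, 0] = pattern[::-1, :]      # same values, flipped vertically

# Assumed (unnormalized) losses: squared differences of activations and of Gram matrices.
content_loss = 0.5 * np.sum((set_a - set_b) ** 2)
style_loss = np.sum((gram_matrix(set_a) - gram_matrix(set_b)) ** 2)

print("content loss:", content_loss)
print("style loss:", style_loss)
```

In this toy case the content loss is nonzero while the style loss is zero: flipping a feature map changes where the activations sit, but not the inner products between feature maps.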

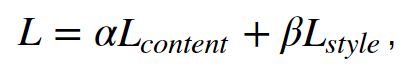

Understanding the style loss

Final loss

It is defined as,

$$L = \alpha L_{content} + \beta L_{style}$$

where α and β are user-defined hyperparameters. Here β has absorbed the M^l normalization factor defined earlier. By controlling α and β, we can control the amount of content and style injected into the generated image. The paper also includes a visualization of the effects of different α and β values.

Defining the optimizer

Next, we use the Adam optimizer to minimize the loss of the network.

Defining the input pipeline

Here we describe the full input pipeline. tf.data provides an easy-to-use and intuitive interface for implementing input pipelines. For most image manipulation tasks we use the tf.image API; still, the ability of tf.image to handle dynamically sized images is minimal. For example, if we want to crop and resize images dynamically, it is better to do so in a generator.

We have defined two input pipelines: one for style and one for content. The content input pipeline looks only for jpg images whose names start with content_, whereas the style pipeline looks for images whose names begin with style_.

Defining the computational graph

We will be representing the full computational graph.
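Returning to the final loss, the role of α and β can be illustrated with a small sketch. It assumes the weighted-sum form used in the original style transfer paper (α times the content loss plus β times the style loss); the `total_loss` function and the loss values are hypothetical and only show how the two weights shift the balance.

```python
def total_loss(content_loss, style_loss, alpha, beta):
    """Weighted sum of the content and style losses (assumed form)."""
    return alpha * content_loss + beta * style_loss

# Made-up loss values, for illustration only.
content_loss = 8.0
style_loss = 2.0

# A larger alpha makes content mismatches dominate the objective.
content_heavy = total_loss(content_loss, style_loss, alpha=1.0, beta=0.5)

# A larger beta makes style mismatches weigh more, relative to content.
style_heavy = total_loss(content_loss, style_loss, alpha=0.5, beta=1.0)

print("content-heavy total:", content_heavy)
print("style-heavy total:", style_heavy)
```

Since the optimizer only sees the combined value, it is the ratio of α to β, rather than their absolute sizes, that mainly determines how much content versus style ends up in the generated image.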
