Unicodes for Operators in Java

Computers store data internally in binary. Characters are stored as combinations of 0s and 1s, and the process of mapping characters to those bit patterns is called encoding. Character encoding schemes are important because they allow the same information to be represented consistently on different types of devices.

Categories of Coding

Several encoding schemes were in use before the Unicode system, for example:

- ASCII (American Standard Code for Information Interchange) for English, originating in the United States.
- ISO 8859-1 for Western European languages.
- KOI-8 for Russian.
- GB18030 and BIG-5 for Chinese.

Base64, often listed alongside these, is a binary-to-text encoding rather than a character set: it represents arbitrary binary data using printable ASCII characters.

Java uses the Unicode System because...

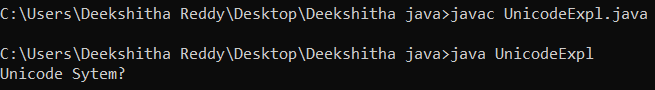

Some languages have several competing character sets, and the length of the code used to represent each character can vary. For instance, some characters need only a single byte to encode, while others need two or more. The Unicode System was developed as a more effective character encoding method that addresses these issues.

What is Unicode System?

The Unicode System is a universal character encoding standard that can represent the characters of most of the world's written languages. It is maintained by the Unicode Consortium. Unicode code points are conventionally written as hexadecimal values.

Several Unicode Transformation Formats (UTF) exist:

- UTF-8: uses 8-bit (1-byte) code units; a character takes one to four bytes.
- UTF-16: uses 16-bit (2-byte) code units; a character takes one or two code units (two or four bytes).
- UTF-32: uses a fixed 32 bits (4 bytes) per character.

In Java source code, a Unicode character is written as the escape sequence \u followed by a four-digit hexadecimal value, so the possible values range from \u0000 to \uFFFF. Examples include the copyright symbol (\u00A9), the capital Greek letter delta (\u0394), and the double quote (\u0022).

UnicodeExpl.java
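A minimal sketch of a UTF-8 round trip, using two of the escapes listed above. The class name UnicodeDemo and the variables str1 and newstr follow the article's own description of its program. The helper methods roundTrip and utf8Length, and the choice of sample characters, are illustrative assumptions and not the original listing.

```java
import java.nio.charset.StandardCharsets;

class UnicodeDemo {

    // Encodes text to UTF-8 bytes, then decodes the bytes back into a String.
    // (Hypothetical helper, introduced only for this sketch.)
    static String roundTrip(String text) {
        byte[] utf8Bytes = text.getBytes(StandardCharsets.UTF_8);
        return new String(utf8Bytes, StandardCharsets.UTF_8);
    }

    // Number of bytes the text occupies once encoded as UTF-8.
    // (Hypothetical helper, introduced only for this sketch.)
    static int utf8Length(String text) {
        return text.getBytes(StandardCharsets.UTF_8).length;
    }

    public static void main(String[] args) {
        // Copyright sign and Greek capital delta, written as Unicode escapes.
        String str1 = "\u00A9 \u0394";
        String newstr = roundTrip(str1);

        System.out.println(newstr);               // rendering depends on the terminal's encoding
        System.out.println(str1.equals(newstr));  // true: the round trip is lossless

        // The double quote is written as a char literal here; the same escape
        // inside a String literal would be read as the closing quote.
        char quote = '\u0022';
        System.out.println(utf8Length(String.valueOf(quote))); // 1 byte
        System.out.println(utf8Length("\u00A9"));               // 2 bytes
        System.out.println(utf8Length("\u0394"));               // 2 bytes
    }
}
```

Because the class is not declared public, the file can keep the name UnicodeExpl.java. Passing StandardCharsets.UTF_8 explicitly, rather than calling getBytes() with no argument, keeps the result independent of the platform's default charset.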

In the code above, a class named UnicodeDemo is created. A Unicode String named str1 is first converted into a UTF-8 byte array using the getBytes() method. The byte array is then converted back into a String, newstr, whose value is displayed on the terminal.

Issues Related to Unicode

The Unicode standard was originally designed as a 16-bit character encoding, and Java's primitive data type char was intended to be able to represent every character. However, a 16-bit encoding can represent only 65,536 characters, which is not enough for all the characters used around the world. The Unicode code space was therefore extended to 1,112,064 possible characters, with code points running up to U+10FFFF. Characters whose code points lie above U+FFFF, and so do not fit in 16 bits, are called supplementary characters. Java represents each of them with a pair of char values (a surrogate pair).

In this article, we've covered fundamental encoding schemes, the Java Unicode System, the issues associated with it, and a Java program that demonstrates its use.
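The pair-of-char representation of supplementary characters described under Issues Related to Unicode can be sketched as follows. The class name SupplementaryDemo, the helper fromCodePoint, and the sample code point U+1F600 are assumptions chosen only for illustration. The Character and String methods used are part of the standard Java library.

```java
class SupplementaryDemo {

    // Builds a String that holds exactly one code point.
    // (Hypothetical helper, introduced only for this sketch.)
    static String fromCodePoint(int codePoint) {
        return new String(Character.toChars(codePoint));
    }

    public static void main(String[] args) {
        int codePoint = 0x1F600; // above U+FFFF, so it does not fit in one char
        String s = fromCodePoint(codePoint);

        System.out.println(Character.isSupplementaryCodePoint(codePoint)); // true
        System.out.println(s.length());                                    // 2 char values
        System.out.println(s.codePointCount(0, s.length()));               // 1 character
        System.out.println(Character.isHighSurrogate(s.charAt(0)));        // true
        System.out.println(Character.isLowSurrogate(s.charAt(1)));         // true
        System.out.println(Integer.toHexString(s.codePointAt(0)));         // 1f600
    }
}
```

The difference between length() and codePointCount() is the practical consequence: code that walks a String one char at a time may split a supplementary character in half, so code-point-based methods are the safer choice when such characters can occur.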

