What is a Data Center in Cloud Computing?

What is a Data Center?

A data center (also written datacenter) is a facility made up of networked computers, storage systems, and computing infrastructure that businesses and other organizations use to organize, process, store, and disseminate large amounts of data. A business typically relies heavily on the applications, services, and data within a data center, making it a focal point and critical asset for everyday operations.

Enterprise data centers increasingly incorporate facilities for securing and protecting cloud computing resources as well as in-house, on-site resources. As enterprises turn more and more to cloud computing, the boundary between cloud providers' data centers and enterprise data centers becomes less clear.

How do Data Centers work?

A data center facility enables an organization to assemble its resources and infrastructure for data processing, storage, and communication, including:

Gathering all these resources in one data center enables the organization to:

Why are data centers important?

Data centers support almost all enterprise computing, storage, and business applications. To the extent that the business of a modern enterprise runs on computers, the data center is the business. Data centers enable organizations to concentrate their processing power, which in turn enables the organization to focus its attention on:

What are the main components of Data Centers?

Elements of a data center are generally divided into three categories:

A modern data center concentrates an organization's data systems in a well-protected physical infrastructure, which includes:

Data center resources typically include:

A data center also requires a physical facility with physical security access controls and enough square footage to house the entire collection of infrastructure and equipment.

How are Datacenters managed?

Data center management covers many different areas related to the data center, including:

Datacenter Infrastructure Management and Monitoring

Modern data centers make extensive use of monitoring and management software. Software, including data center infrastructure management (DCIM) tools, allows remote IT administrators to monitor the facility and equipment, measure performance, detect failures, and implement a wide range of corrective actions without ever physically entering the data center room.

The development of virtualization has added another important dimension to data center infrastructure management. Virtualization supports the abstraction of servers, networks, and storage, allowing each computing resource to be organized into pools regardless of its physical location. Network, storage, and server virtualization can be implemented through software, which has given software-defined data centers traction. Administrators can then provision workloads, storage instances, and even network configurations from those common resource pools. When administrators no longer need those resources, they can return them to the pool for reuse.

Energy Consumption and Efficiency

Data center designs also recognize the importance of energy efficiency. A simple data center may need only a few kilowatts of power, but enterprise data centers may require more than 100 megawatts. Today, green data centers, which minimize environmental impact through low-emission building materials, catalytic converters, and alternative energy technologies, are growing in popularity.

Data centers can also maximize efficiency through their physical layout, known as the hot aisle/cold aisle layout. The server racks are lined up in alternating rows, with cold air intakes on one side and hot air exhausts on the other. The result is alternating hot and cold aisles, with the exhausts forming a hot aisle and the intakes forming a cold aisle. The exhausts point toward air conditioning equipment.
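To make the alternating hot aisle/cold aisle layout concrete, here is a minimal Python sketch. It assumes a simplified floor plan in which rows are numbered top to bottom and every rack in a row faces the same way; the names `Facing`, `plan_rows`, and `classify_aisles` are hypothetical and not part of any DCIM product. It only checks which aisle receives rack intakes and which receives exhausts; it does not model airflow or temperatures.

```python
from __future__ import annotations

from enum import Enum


class Facing(Enum):
    """Direction a row's rack fronts (cold-air intakes) point.

    Hypothetical model: rows are numbered top to bottom on a floor plan,
    and the aisle between row i and row i + 1 is aisle i.
    """
    UP = "up"      # fronts point toward the aisle above the row
    DOWN = "down"  # fronts point toward the aisle below the row


def plan_rows(n_rows: int) -> list[Facing]:
    """Alternate row orientation so neighbouring rows are either
    front-to-front (sharing a cold aisle) or back-to-back (sharing a hot aisle)."""
    return [Facing.DOWN if i % 2 == 0 else Facing.UP for i in range(n_rows)]


def classify_aisles(rows: list[Facing]) -> list[str]:
    """Label each aisle between two adjacent rows as 'cold', 'hot' or 'mixed'."""
    labels = []
    for above, below in zip(rows, rows[1:]):
        # Count how many of the two rows present their intakes to this aisle.
        intakes = int(above is Facing.DOWN) + int(below is Facing.UP)
        if intakes == 2:
            labels.append("cold")   # both rows draw cool air from this aisle
        elif intakes == 0:
            labels.append("hot")    # both rows exhaust hot air into this aisle
        else:
            labels.append("mixed")  # one row's exhaust feeds the other's intake
    return labels


if __name__ == "__main__":
    print(classify_aisles(plan_rows(4)))       # ['cold', 'hot', 'cold']
    print(classify_aisles([Facing.DOWN] * 4))  # ['mixed', 'mixed', 'mixed']
```

The second call shows why the rows alternate: if every row faces the same way, each aisle is "mixed", so one row's hot exhaust is drawn straight into the next row's intakes.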
In many designs, the air conditioning equipment is placed between the server cabinets in the row or aisle and distributes cold air back into the cold aisle. This configuration is known as in-row cooling.

Organizations often measure data center energy efficiency through power usage effectiveness (PUE), the ratio of the total power entering the data center to the power used by IT equipment. A value closer to 1.0 means that less of the incoming power is spent on overhead such as cooling and power distribution. However, the rise of virtualization has allowed far more productive use of IT equipment, resulting in much higher efficiency, lower energy use, and reduced energy costs, so metrics such as PUE are no longer central to energy efficiency goals. Organizations can still assess PUE and use comprehensive power and cooling analysis to better understand and manage energy efficiency.

Datacenter Levels

Data centers are not defined by their physical size or style. Small businesses can operate successfully with several servers and storage arrays networked within a closet or small room. At the same time, major computing organizations, such as Facebook, Amazon, or Google, can fill vast warehouse spaces with data center equipment and infrastructure. In other cases, data centers can be assembled into mobile installations, such as shipping containers (also known as data centers in a box), that can be moved and deployed as needed.

However, data centers can be defined by different levels of reliability or resilience, sometimes referred to as data center tiers. In 2005, the American National Standards Institute (ANSI) and the Telecommunications Industry Association (TIA) published the standard ANSI/TIA-942, "Telecommunications Infrastructure Standard for Data Centers", which defined four levels of data center design and implementation guidelines. Each subsequent level aims to provide greater resilience, security, and reliability than the previous level.
For example, a Tier I data center is little more than a server room, while a Tier IV data center provides redundant subsystems and higher security. Levels can be differentiated by available resources, data center capabilities, or uptime guarantees. The Uptime Institute defines data center levels as:
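
- Tier I (Basic Capacity): dedicated site infrastructure for IT, without redundant power or cooling components.
- Tier II (Redundant Capacity Components): adds redundant power and cooling components for greater protection against disruptions.
- Tier III (Concurrently Maintainable): any component can be removed or replaced for planned maintenance without shutting down IT equipment.
- Tier IV (Fault Tolerant): the infrastructure can withstand any single failure or interruption without affecting IT operations.
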
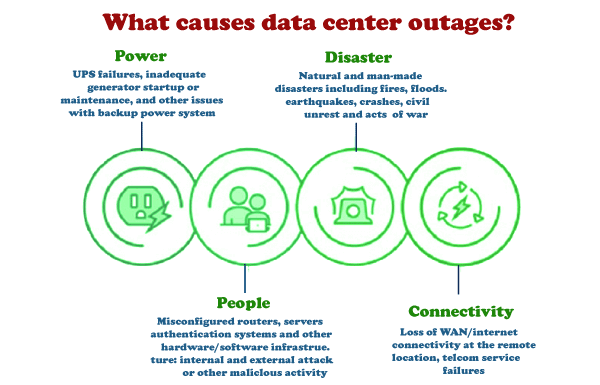

Most data center outages can be attributed to these four general categories.

Datacenter Architecture and Design

Although almost any suitable location can serve as a data center, deliberately designing and implementing a data center requires careful consideration. Beyond the basic issues of cost and taxes, sites are selected based on several criteria: geographic location, seismic and meteorological stability, access to roads and airports, availability of energy and telecommunications, and even the prevailing political environment. Once a site is secured, the data center architecture can be designed with attention to the structure and layout of the mechanical and electrical infrastructure and the IT equipment. These decisions are guided by the availability and efficiency goals of the desired data center tier.

Datacenter Security

Data center designs must also implement sound safety and security practices. For example, safety is often reflected in the layout of doorways and access corridors, which must accommodate the movement of large, cumbersome IT equipment and allow employees to access and repair the infrastructure. Fire suppression is another key safety area: the widespread use of sensitive, high-energy electrical and electronic equipment precludes common water sprinklers. Instead, data centers often use environmentally friendly chemical fire suppression systems, which effectively starve fires of oxygen while minimizing collateral damage to equipment. Because the data center is also a core business asset, comprehensive security measures and access controls are needed. These may include:

These security measures can help detect and prevent misconduct by employees, contractors, and intruders.

What is Data Center Consolidation?

An organization does not need to rely on a single data center. Modern businesses can use two or more data center installations in multiple locations for greater flexibility and better application performance, reducing latency by locating workloads closer to users. Conversely, a business with multiple data centers may choose to consolidate them into fewer locations to reduce the cost of IT operations. Consolidation typically occurs during mergers and acquisitions, when the majority business no longer needs the data centers owned by the subordinate business.

What is Data Center Colocation?

Data center operators can also pay a fee to rent server space in a colocation facility. Colocation is an attractive option for organizations that want to avoid the large capital expenditure associated with building and maintaining their own data centers. Today, colocation providers are expanding their offerings to include managed services such as interconnectivity, allowing customers to connect to the public cloud. Because many service providers now offer managed services alongside their colocation features, the definition of managed services becomes hazy, as vendors market the term in slightly different ways. The important distinction to make is:

What is the Difference between a Data Center and the Cloud?

Cloud computing vendors offer features similar to those of enterprise data centers, and they deliver these services from their own data centers. The biggest difference between a cloud data center and a typical enterprise data center is scale: because cloud data centers serve many different organizations, they can become very large.

Large enterprises such as Google may require very large data centers, such as the Google data center in Douglas County, Ga. Because enterprise data centers increasingly implement private cloud software, they increasingly look, to end users, like the services provided by commercial cloud providers. Private cloud software builds on virtualization to add cloud-like services, including:

The goal is to allow individual users to provision workloads and other computing resources on demand, without IT administrative intervention. Further blurring the lines between the enterprise data center and cloud computing is the development of hybrid cloud environments. As enterprises increasingly rely on public cloud providers, they must incorporate connectivity between their own data centers and their cloud providers. For example, platforms such as Microsoft Azure emphasize hybrid use of local data centers with Azure or other public cloud resources. The result is not the elimination of data centers but the creation of a dynamic environment that allows organizations to run workloads locally or in the cloud, or to move those instances to or from the cloud as desired.

Evolution of Data Centers

The origins of the first data centers can be traced back to the 1940s and early computer systems such as the Electronic Numerical Integrator and Computer (ENIAC). These early machines were complicated to maintain and operate and had cables connecting all the necessary components. They were also used by the military, which meant that special computer rooms with racks, cable trays, cooling mechanisms, and access restrictions were needed to accommodate all the equipment and implement appropriate security measures. However, it was not until the 1990s, when IT operations began to grow in complexity and inexpensive networking equipment became available, that the term data center first came into use. It became possible to house all of a company's servers in one room within the company, and these specialized computer rooms, dubbed data centers within organizations, gained traction. During the dot-com bubble of the late 1990s, companies' need for Internet speed and a constant Internet presence called for large amounts of networking equipment, which in turn required large facilities. At this point, data centers became popular and began to resemble those described above.
Throughout the history of computing, as computers have become smaller and networks bigger, the data center has evolved and shifted to accommodate the technology of the day.

Difference between Cloud and Data Center

Most organizations rely heavily on data for their day-to-day operations, irrespective of the industry or the nature of the data. This data can be used to make business decisions, identify patterns, improve the services provided, or analyze weak links in a workflow.

Cloud

Cloud is a term used to describe a group of services delivered by a global network of servers, each of which has a unique function. The cloud is not a single physical entity; it is a network of remote servers linked together to operate as a single unit for an assigned task. Physically, those servers are housed in buildings containing many computer systems, that is, in data centers. We access the cloud through the Internet because cloud providers offer it as a service.

A common point of confusion is whether the cloud is the same as cloud computing. The answer is no. Cloud services such as compute run in the cloud: the computing service lets users 'rent' computer systems in a data center over the Internet. Another example of a cloud service is storage. AWS says, "Cloud computing is the on-demand delivery of IT resources over the Internet with pay-as-you-go pricing. Instead of buying, owning, and maintaining physical data centers and servers, you can access technology services, such as computing power, storage, and databases, from a cloud provider such as Amazon Web Services (AWS)."

Types of Cloud

Businesses use cloud resources in different ways. There are mainly four of them:

Data Center

A data center can be described as a facility of networked computers and associated components (such as telecommunications and storage) that help businesses and organizations handle large amounts of data. These data centers allow data to be organized, processed, stored, and transmitted across the applications used by businesses.

Types of Data Center

Businesses use different types of data centers, including:

Difference between Cloud and Data Center:
