Containerized Applications

Application containerization is an OS-level virtualization method used to deploy and run distributed applications without launching an entire virtual machine (VM) for each app. Multiple isolated applications or services run on a single host and share the same OS kernel.

Containers work on bare-metal systems, cloud instances, and virtual machines, across Linux and select Windows and macOS versions.

It is an evolving technology that is changing the way developers test and run instances of an application in the cloud.

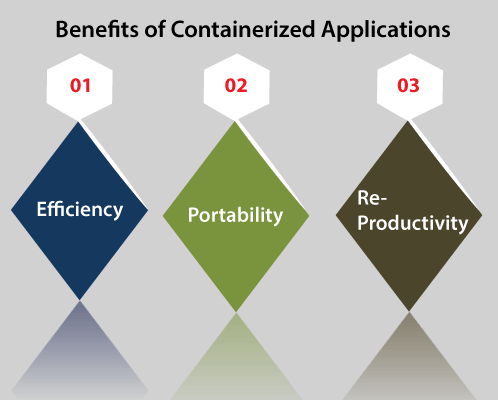

Benefits of Containerized Applications

Some of the key benefits of containerized applications are discussed below:

- Efficiency: Proponents of containerization point to its efficiency in memory, CPU, and storage use compared to traditional virtualization and physical application hosting. Without the overhead of a virtual machine for each application, the same infrastructure can support many more application containers.

- Portability: Application containers can run on any system or cloud without code changes, as long as the OS is the same across systems.

- Reproducibility: This is one of the reasons container adoption fits well with the DevOps methodology. The file systems, binaries, and other data stay the same throughout the application lifecycle, from code build through test and production. All development artifacts become a single image. As a result, version control at the image level can replace configuration management at the system level.
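
The idea that an application travels through its lifecycle as one unchanging image can be sketched with a content digest. The example below is a simplified illustration, not the digest algorithm of any real container registry: the function `image_digest` and the sample file names are hypothetical. It only shows that identical content yields the same identifier, while any change yields a new one.

```python
import hashlib


def image_digest(files: dict[str, bytes]) -> str:
    """Return a SHA-256 digest over file paths and contents, in sorted path order."""
    h = hashlib.sha256()
    for path in sorted(files):
        # Length-prefix each field so that field boundaries are unambiguous.
        for part in (path.encode("utf-8"), files[path]):
            h.update(len(part).to_bytes(8, "big"))
            h.update(part)
    return "sha256:" + h.hexdigest()


# Hypothetical image contents used in test and in production.
test_build = {"app/main.py": b"print('hello')\n", "lib/config.ini": b"mode=prod\n"}
prod_build = dict(reversed(list(test_build.items())))  # same content, different order
patched = {**test_build, "lib/config.ini": b"mode=debug\n"}

same = image_digest(test_build) == image_digest(prod_build)
changed = image_digest(test_build) != image_digest(patched)
print(same, changed)  # True True
```

Because the identifier depends only on the content, a team can track which exact image ran in each environment by recording its digest.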

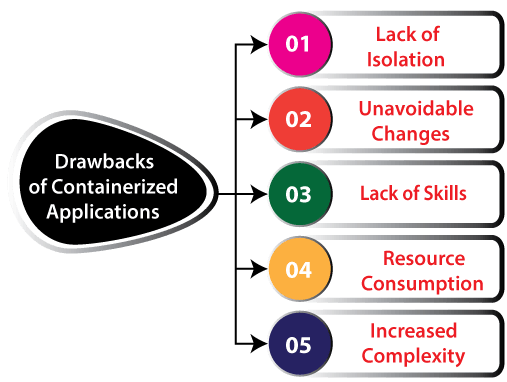

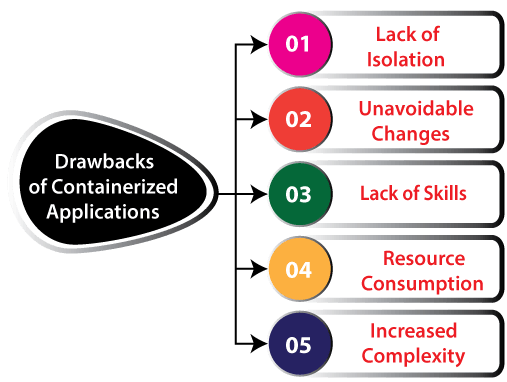

Drawbacks of Containerized Applications

A few essential drawbacks of containerized applications are listed and discussed below:

- Lack of isolation: One potential drawback of containerization is the lack of isolation from the host OS. Unlike applications in a virtual machine, application containers are not abstracted from the host OS, so they share its kernel. Security scanners and monitoring tools can protect the hypervisor and the OS, but not the application containers. However, containerization also offers some security improvements, due to the increased isolation of application packages and the more specialized, smaller-footprint OSes that run them. Policies dictate privilege levels for containers to create secure deployments.

- Unavoidable changes: Application containerization is a relatively new and rapidly developing enterprise-level IT methodology. As a result, change and instability are unavoidable. This can be positive or negative, because technology improvements can fix bugs and increase the stability of container technology.

- Lack of skills: There is generally a lack of education and skill among IT workers. Far fewer administrators understand containers well than understand server virtualization.

- Resource consumption and increased complexity: OS lock-in can also pose a problem, although developers already write applications to run on specific operating systems. If an enterprise needs to run a containerized Windows application on a Linux server, a compatibility layer or nested virtual machines could solve the problem. However, doing so would increase complexity and resource consumption.

How Do Containerized Applications Work?

Application containers include the runtime components, such as files, environment variables, and libraries, that are necessary to run the desired software. Containers consume fewer resources than a comparable deployment on virtual machines because they share resources without a full OS to underpin each app. The complete set of information needed to execute in a container is the image. The container engine deploys these images on hosts.
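
The relationship between an image and the containers started from it can be modeled in a few lines. This is a toy model under stated assumptions, not the API of Docker or any other engine: the classes `Image` and `Container`, the helper `start`, and the name `shop-api:1.0` are all hypothetical.

```python
from dataclasses import dataclass, field
from types import MappingProxyType
from typing import Mapping


@dataclass(frozen=True)
class Image:
    """Read-only bundle of everything the application needs at run time."""
    name: str
    files: Mapping[str, bytes]
    env: Mapping[str, str]


@dataclass
class Container:
    """A running instance: shares the image, keeps its own changes separately."""
    image: Image
    env: dict[str, str]
    writes: dict[str, bytes] = field(default_factory=dict)

    def read(self, path: str) -> bytes:
        # Prefer this container's own write; fall back to the shared image.
        if path in self.writes:
            return self.writes[path]
        return self.image.files[path]

    def write(self, path: str, data: bytes) -> None:
        self.writes[path] = data  # the image itself is never modified


def start(image: Image, **env_overrides: str) -> Container:
    """Create a container whose environment is the image's plus any overrides."""
    return Container(image=image, env={**image.env, **env_overrides})


image = Image(
    name="shop-api:1.0",  # hypothetical image name
    files=MappingProxyType({"/app/main.py": b"print('ok')", "/lib/libssl.so": b"\x7fELF"}),
    env=MappingProxyType({"PORT": "8080"}),
)

a = start(image)
b = start(image, PORT="9090")
a.write("/app/main.py", b"print('patched')")

print(a.read("/app/main.py"))        # b"print('patched')"
print(b.read("/app/main.py"))        # b"print('ok')"
print(a.env["PORT"], b.env["PORT"])  # 8080 9090
```

Both containers reuse one image, which is part of why containers can be lighter than giving every app its own full OS.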

The most common application containerization technology is Docker, specifically the open-source Docker Engine, which is based on the universal runtime runC. Docker Swarm is a clustering and scheduling tool. With it, IT administrators and developers can establish and manage a cluster of Docker nodes as a single virtual system. The main competing offering has been CoreOS's rkt container engine, which relies on the App Container (appc) spec as its open, standard container format but can also run Docker container images. Users and ecosystem partners have raised concerns about vendor lock-in in app containerization. However, this is tempered by the large amount of open-source technology underpinning container products.

Application containerization works with microservices and distributed applications. Each container runs independently of the others and uses minimal resources from the host. Each microservice communicates with the others through application programming interfaces (APIs).

The container virtualization layer can scale up microservices to meet rising demand for an application component and distribute the load. With virtualization, developers can present a set of physical resources as disposable virtual machines. This setup also encourages flexibility. For example, if developers want a variation from the standard image, they can create a container that holds only the new library in a virtualized environment.

To update an application, developers make changes to the code in the container image and then redeploy that image to run on the host OS.
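
To make the API-based interaction concrete, the following sketch runs a tiny pricing service over HTTP on the local machine and calls it from order logic. It uses only the Python standard library. The service name, the fixed price of 42, and the URL layout are assumptions made for illustration, and for brevity the calling side is a plain function rather than a second service in its own container.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, build_opener


class PriceHandler(BaseHTTPRequestHandler):
    """Hypothetical pricing microservice: GET /<sku> returns a JSON price."""

    def do_GET(self):
        body = json.dumps({"sku": self.path.strip("/"), "price": 42}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the example output quiet


def start_service(handler):
    """Serve on an ephemeral localhost port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


price_service = start_service(PriceHandler)
price_url = f"http://127.0.0.1:{price_service.server_port}"

# Talk to localhost directly, ignoring any proxy settings in the environment.
opener = build_opener(ProxyHandler({}))


def order_total(sku: str, quantity: int) -> int:
    """Order logic that only knows the pricing service through its HTTP API."""
    with opener.open(f"{price_url}/{sku}", timeout=5) as response:
        price = json.load(response)["price"]
    return price * quantity


total = order_total("widget", 3)
price_service.shutdown()
price_service.server_close()
print(total)  # 126
```

The caller depends only on the URL and the JSON shape, so the pricing service could be replaced or moved to another host without changing the order logic.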
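
Scaling a component to match demand comes down to simple arithmetic. The sketch below is one possible rule, not the algorithm of any particular orchestrator: the function `replicas_needed`, the per-replica capacity of 100 requests per second, the bounds, and the service names are all assumed for illustration.

```python
import math


def replicas_needed(requests_per_second: float, capacity_per_replica: float,
                    minimum: int = 1, maximum: int = 10) -> int:
    """Return how many container replicas cover the load, clamped to [minimum, maximum]."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    return max(minimum, min(maximum, wanted))


# Hypothetical load per microservice, in requests per second.
demand = {"checkout": 450.0, "search": 1200.0, "profile": 20.0}
plan = {name: replicas_needed(load, capacity_per_replica=100.0)
        for name, load in demand.items()}
print(plan)  # {'checkout': 5, 'search': 10, 'profile': 1}
```

Each component is scaled on its own, so a busy search service can grow without adding copies of the quieter profile service.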
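
The update cycle can be sketched as replacing one image reference with another instead of editing a running system. The `Deployment` record, the `redeploy` helper, and the image tags below are hypothetical. They model the bookkeeping only and do not call a real container engine.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Deployment:
    service: str
    image_tag: str
    replicas: int


def redeploy(current: Deployment, new_tag: str) -> Deployment:
    """Return a new deployment record that runs new_tag; the old record is untouched."""
    return replace(current, image_tag=new_tag)


history = [Deployment("shop-api", "shop-api:1.0", replicas=3)]

# A code change produces a new image, which is then redeployed.
history.append(redeploy(history[-1], "shop-api:1.1"))

rollback_target = history[-2]  # the previous image is still available
print(history[-1].image_tag, rollback_target.image_tag)  # shop-api:1.1 shop-api:1.0
```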

App Containerization Technology Types

Besides Docker, there are other application containerization technologies. These include the following:

- Apache Mesos: It is an open-source cluster manager. It handles workloads in a distributed environment through dynamic resource sharing and isolation. Apache Mesos is best suited for deploying and managing applications in large-scale clustered environments.

- Google Kubernetes Engine: It is a managed, production-ready environment for deploying containerized applications. It enables rapid app development and iteration by making it easy to deploy, update, and manage applications and services.

- ECR (Amazon Elastic Container Registry): It is an Amazon Web Services product that stores and manages Docker images and makes them available for deployment, for example to container services running on Amazon EC2 instances. Amazon ECR hosts the images in a highly available and scalable architecture, so developers can deploy containers for their applications dependably.

- AKS (Azure Kubernetes Service): It is a managed container orchestration service based on the open-source Kubernetes system. It is available on the Microsoft Azure public cloud. Developers can use AKS to deploy, scale, and manage Docker containers and container-based applications across a cluster of container hosts.

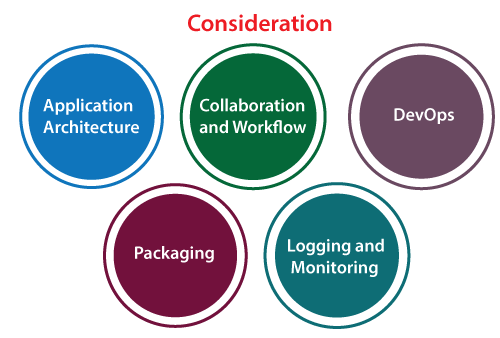

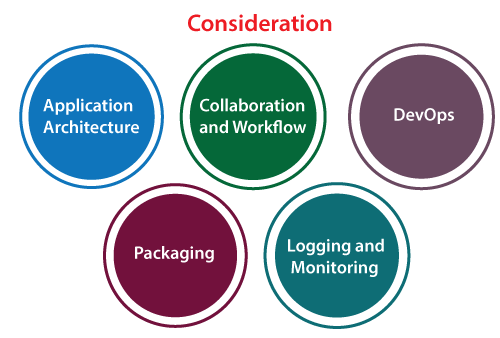

Considerations When Selecting a Containerization Platform

When choosing a containerization platform, developers need to consider the following aspects:

- Application architecture: Developers focus on the application architecture decisions they need to make, such as whether the application is monolithic or built from microservices, and whether it is stateful or stateless.

- Collaboration and workflow: Developers consider the changes to their workflows and whether the platform enables them to collaborate easily with other stakeholders.

- DevOps: Developers consider the requirements for using a self-service interface to deploy apps through the DevOps pipeline.

- Packaging: Developers consider the format and tools for packaging the application code, dependencies, and containers.

- Logging and monitoring: Developers make sure that the available logging and monitoring options meet their requirements and fit their development workflows.
