Talend Data Integration Job Designing

In this section, we will create our first Job in Talend Studio.

A Job design is the runnable layer of a business model. It is a graphical design of one or more connected components that lets us set up and run a data flow management process.

Job design translates business needs into code, routines, and programs; in other words, it implements our data flow.

The Job we design can connect all of the sources and targets that we need for data integration and any other related process.

While designing a Job, we can perform actions such as:

- Set the connections and relationships between components to define the sequence and nature of the actions.

- Change the default settings of components, and create new components that match our exact needs.

- Access the code at any time to edit the components.

- Design items and add them to the repository for reuse and sharing.

Note:

We need to install an Oracle JVM 1.8 (IBM JVM is not supported) to execute our Jobs.

Use the link below to download an Oracle JVM:

https://www.oracle.com/technetwork/java/javase/downloads/index.html
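Before launching the Studio, it can help to confirm which JVM is actually in use. The following is a minimal sketch in plain Java, not part of Talend; the class name `JvmCheck` and its helper are illustrative assumptions. It reads the standard `java.version` and `java.vendor` system properties and checks whether the version string belongs to the 1.8 line.

```java
// Illustrative helper, not a Talend API: reports the JVM this code runs on.
class JvmCheck {

    // Java 8 reports its version as "1.8.0_xxx"; later releases use "9", "11.0.2", and so on.
    static boolean isJava8(String version) {
        return version != null && version.startsWith("1.8");
    }

    public static void main(String[] args) {
        String version = System.getProperty("java.version");
        String vendor = System.getProperty("java.vendor");
        System.out.println("Java version: " + version);
        System.out.println("Java vendor:  " + vendor);
        System.out.println("Is Java 1.8:  " + isJava8(version));
    }
}
```

Running it with the same `java` executable that the Studio uses shows whether the installed JVM matches the requirement in the note above.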

Follow the process below to design a Job in Talend Studio for Data Integration:

- Create a new Job

- Add the components

- Connect the components

- Configure the components

- Execute the Job

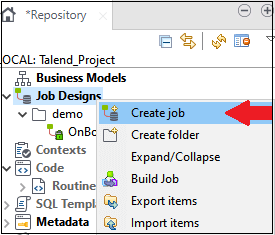

Create a new Job

Step1:

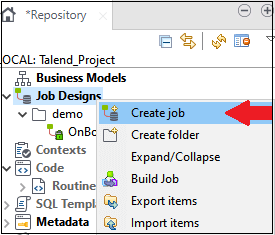

- Open Talend Open Studio for Data Integration.

- Go to the Repository pane, right-click Job Designs, and select Create Job, as shown in the screenshot below:

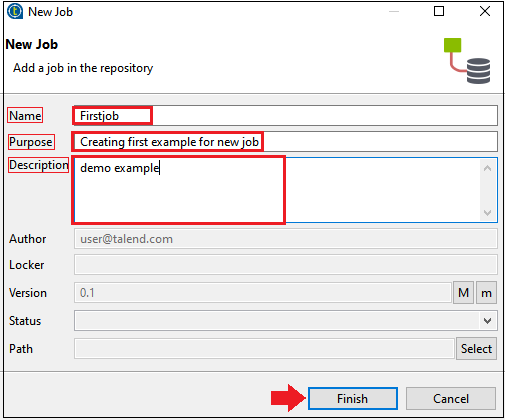

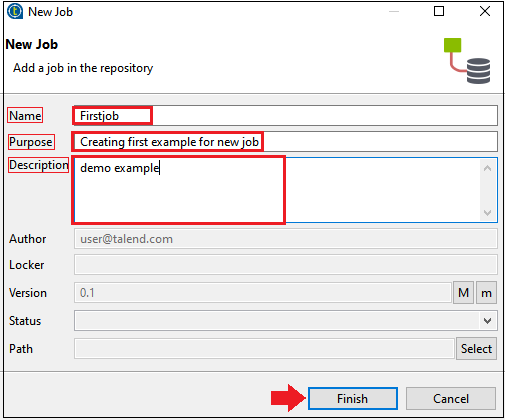

Step2:

- The New Job window opens. Fill in details such as the Name, Purpose, and Description, and click the Finish button, as shown in the screenshot below:

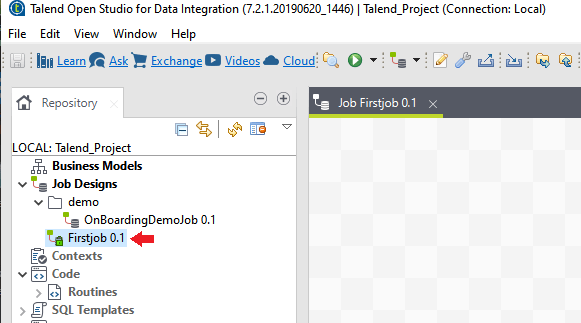

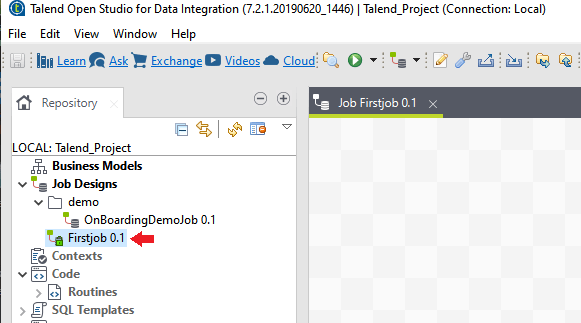

- The Job is created under the Job Designs section, as shown in the image below:

Adding the Components:

The next phase of Job designing is adding the components. After that, we will connect and configure them.

Step3:

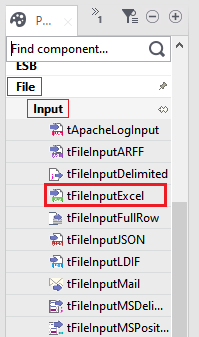

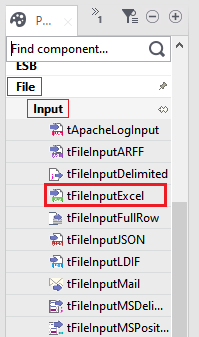

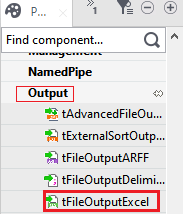

To add components to a Job, go to the Palette panel, which lists a large number of available components.

Alternatively, type the name of the component in the search field and select it.

For example, we will select tFileInputExcel, which is found under Input in the File family:

Palette → File → Input → tFileInputExcel

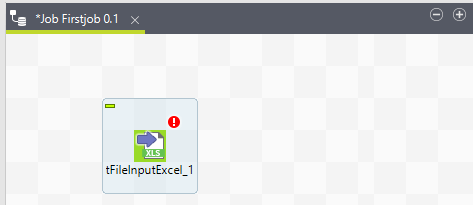

Step4:

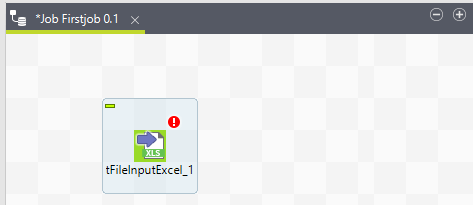

- Since we are using an Excel file as input, drag the tFileInputExcel component from the Palette panel and drop it onto the design workspace window, as shown in the image below:

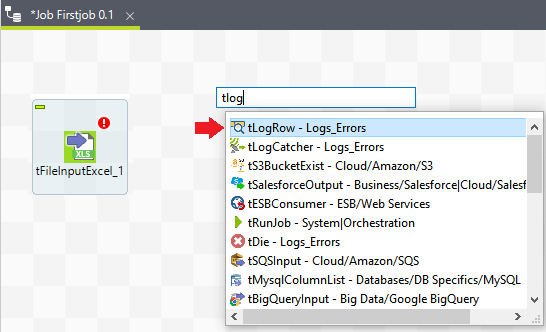

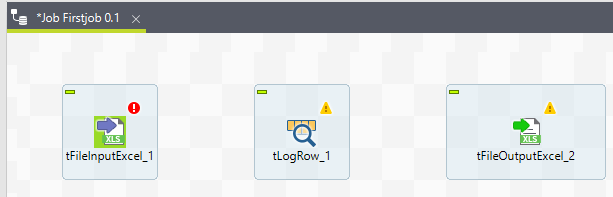

Step5:

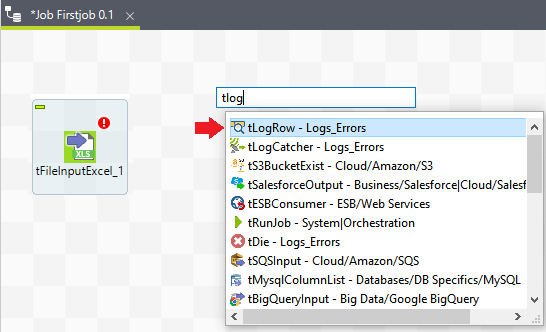

- Next, click anywhere in the design workspace window.

- A search box appears. Type tLogRow and select it from the list; the selected component is then placed in the design workspace window, as shown in the image below:

Note:

tLogRow displays the flow content (rows) on the console of the running Job.

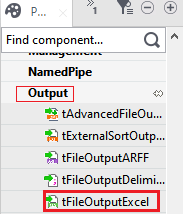

Step6:

- Finally, drag the tFileOutputExcel component from the Palette panel and drop it into the design workspace window, as shown in the screenshot below:

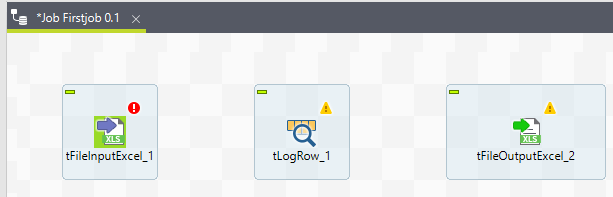

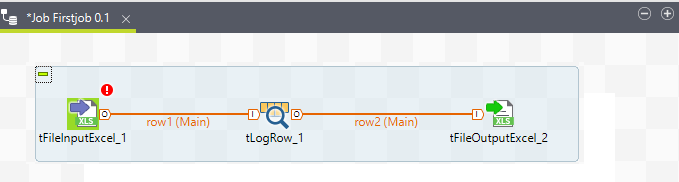

We are now done adding the components of the Job, and our design workspace will look like this:

Connect the components

After adding the components, we will connect them.

To connect the components, follow the process below:

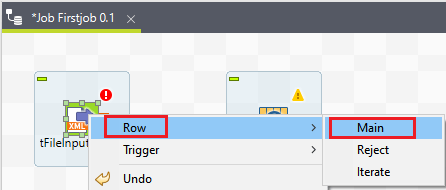

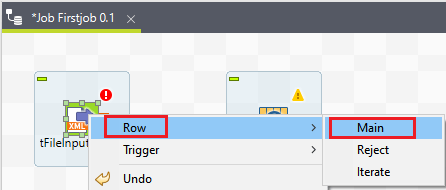

Step7:

- Right-click the first component, tFileInputExcel, and connect it to tLogRow using a row connection, as shown below:

Row → Main

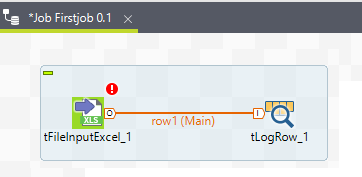

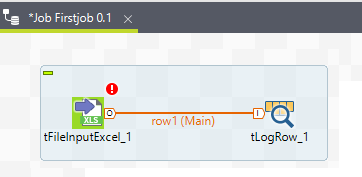

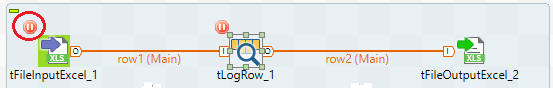

- The row1 (Main) connection is made, as shown in the screenshot below:

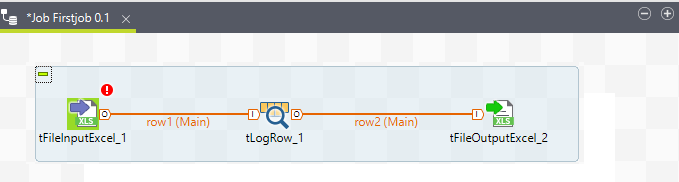

Step8:

- Then, right-click tLogRow and draw a Main row connection to the tFileOutputExcel component, as shown in the image below:

We have now connected the components of the Job.
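To make the idea of a Row > Main connection concrete, here is a conceptual sketch in plain Java. It is not the code that Talend generates; the class `RowFlowSketch`, its methods, and the sample rows are all illustrative assumptions. It only mimics how each row produced by the input component is handed to the logging component and then to the output component, in the order defined by the connections.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Conceptual sketch only: this is NOT the code Talend generates.
// It mimics how a Row > Main link hands each row to the next component.
class RowFlowSketch {

    // Stand-in for tLogRow: prints the row, then passes it on unchanged.
    static String[] logRow(String[] row) {
        System.out.println(Arrays.toString(row));
        return row;
    }

    // Runs input -> log -> output and returns what the "output" component received.
    static List<String[]> run(List<String[]> inputRows) {
        List<String[]> outputRows = new ArrayList<>();
        for (String[] row : inputRows) {      // first Main link: input -> log
            String[] logged = logRow(row);
            outputRows.add(logged);           // second Main link: log -> output
        }
        return outputRows;
    }

    public static void main(String[] args) {
        List<String[]> rows = Arrays.asList(
                new String[] {"1", "Alice"},  // made-up sample data
                new String[] {"2", "Bob"});
        System.out.println("Rows written: " + run(rows).size());
    }
}
```

The point of the sketch is the ordering: every row passes through the components one after another, which is what the two Main links express in the designer.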

Configure the components

After adding and connecting the components, the next phase is to configure them.

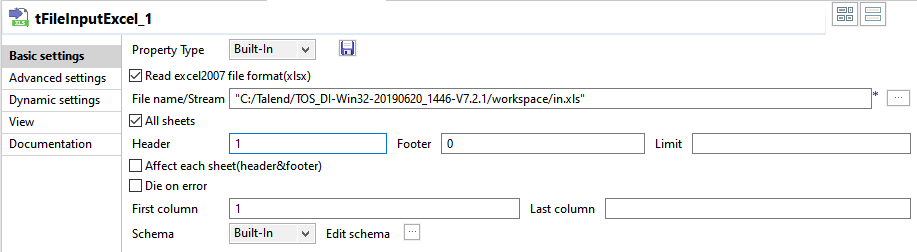

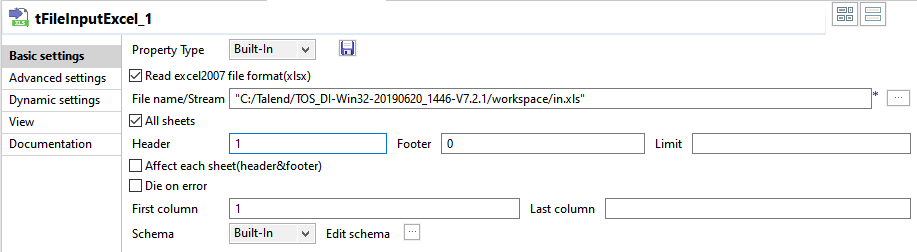

Step9:

- To configure the components, double-click the first component, tFileInputExcel, and enter the path of the input file in the File name/stream field. If the first row of the Excel file contains the column names, enter 1 in the Header field, as shown below:

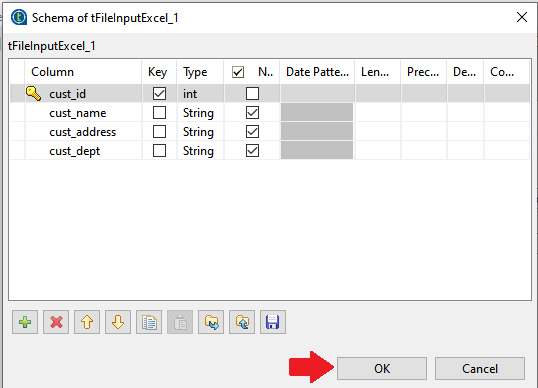

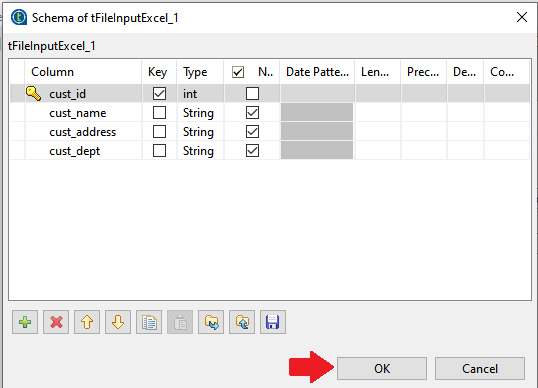
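The effect of the Header value can be pictured with a small plain-Java sketch. This is not Talend code: the class `HeaderSkipSketch` is hypothetical, and an in-memory list of strings stands in for the rows of the Excel sheet. A Header value of 1 means the first row is skipped and only the remaining rows are treated as data.

```java
import java.util.Arrays;
import java.util.List;

// Conceptual sketch of the Header setting; the in-memory list stands in for sheet rows.
class HeaderSkipSketch {

    // Returns the data rows that remain after skipping `header` leading rows.
    static List<String> dataRows(List<String> sheetRows, int header) {
        int start = Math.min(Math.max(header, 0), sheetRows.size());
        return sheetRows.subList(start, sheetRows.size());
    }

    public static void main(String[] args) {
        // Made-up sample data: the first row holds the column names.
        List<String> sheet = Arrays.asList("id,name", "1,Alice", "2,Bob");
        System.out.println(dataRows(sheet, 1)); // header = 1 skips the column-name row
    }
}
```

With a Header value of 0, the column-name row would be read as if it were data, which usually causes type errors once the schema declares numeric columns.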

Step10:

- After that, click Edit schema, where we can add the columns and their types according to our input Excel file.

- After adding the schema, click the OK button, as shown in the screenshot below:

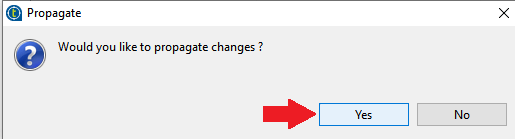

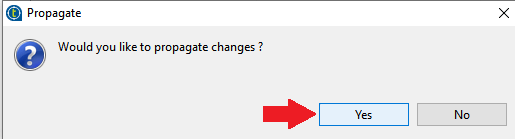
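A schema is essentially a list of named, typed columns. The sketch below illustrates that idea in plain Java under stated assumptions: the columns `id`, `name`, and `salary` are hypothetical and not taken from the tutorial's input file, and the class `SchemaSketch` is not a Talend API. It shows how raw cell text is converted into the types that the schema declares.

```java
// Conceptual sketch: a schema is a list of named, typed columns.
// The columns (id, name, salary) are hypothetical, not taken from the tutorial's file.
class SchemaSketch {

    // One typed row, with one field per schema column.
    static class EmployeeRow {
        final int id;
        final String name;
        final double salary;

        EmployeeRow(int id, String name, double salary) {
            this.id = id;
            this.name = name;
            this.salary = salary;
        }
    }

    // Converts raw cell text into the types declared in the schema.
    // Throws NumberFormatException if a cell does not match its declared type.
    static EmployeeRow parse(String[] cells) {
        if (cells.length != 3) {
            throw new IllegalArgumentException("Expected 3 columns, got " + cells.length);
        }
        return new EmployeeRow(
                Integer.parseInt(cells[0].trim()),
                cells[1].trim(),
                Double.parseDouble(cells[2].trim()));
    }

    public static void main(String[] args) {
        EmployeeRow row = parse(new String[] {"1", "Alice", "52000.50"});
        System.out.println(row.id + " " + row.name + " " + row.salary);
    }
}
```

This is why the column types entered in Edit schema should match the actual content of the Excel file: a mismatch surfaces as a conversion error when the Job runs.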

Step11:

- Then, click the Yes button to propagate the schema changes to the next component.

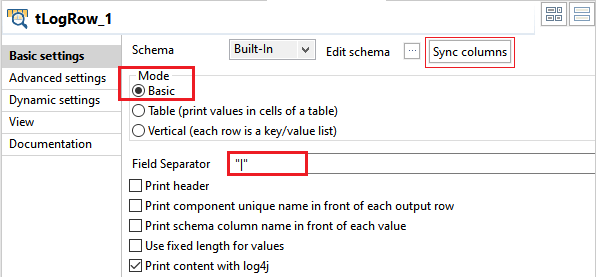

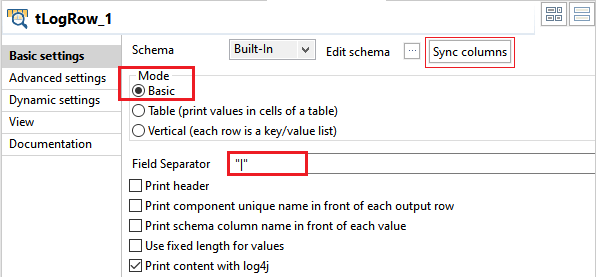

Step12:

Now, go to the tLogRow_1 component, click Sync columns, and select the Mode in which we want to display the rows from our input.

For this, we will select Basic as the Mode and set "|" as the field separator, as shown in the image below:

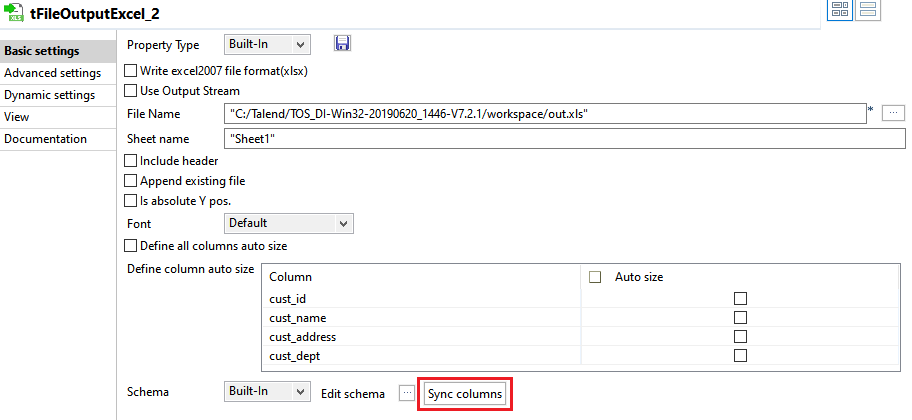

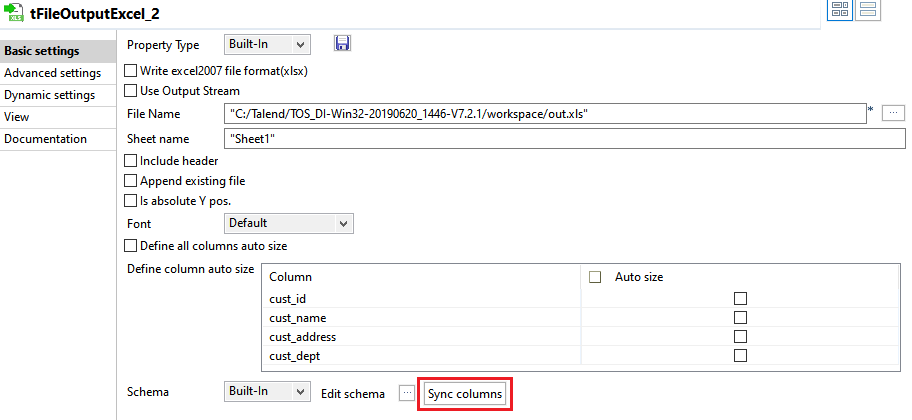
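As a rough illustration of what Basic mode with a "|" field separator produces, the following plain-Java sketch joins the column values of one row with the chosen separator. The class `BasicModeSketch` and the sample values are assumptions for illustration; this is not how tLogRow is implemented internally.

```java
// Conceptual sketch of Basic mode output: one line per row, fields joined by a separator.
class BasicModeSketch {

    // Joins the column values of one row with the chosen field separator.
    static String format(String separator, String... values) {
        return String.join(separator, values);
    }

    public static void main(String[] args) {
        // Made-up sample row; prints 1|Alice|Pune
        System.out.println(format("|", "1", "Alice", "Pune"));
    }
}
```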

Step13:

After that, go to the tFileOutputExcel component and give the path where the output file will be stored.

In the Sheet name field, enter the sheet name of the output Excel file as "Sheet1", and then click Sync columns.

Execute the Job

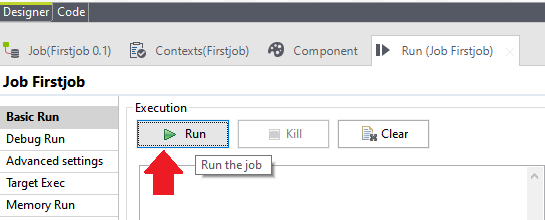

After adding, connecting, and configuring the components, we are ready to execute our first Talend Job.

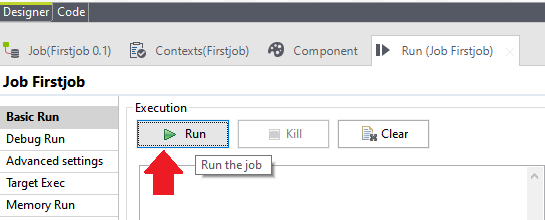

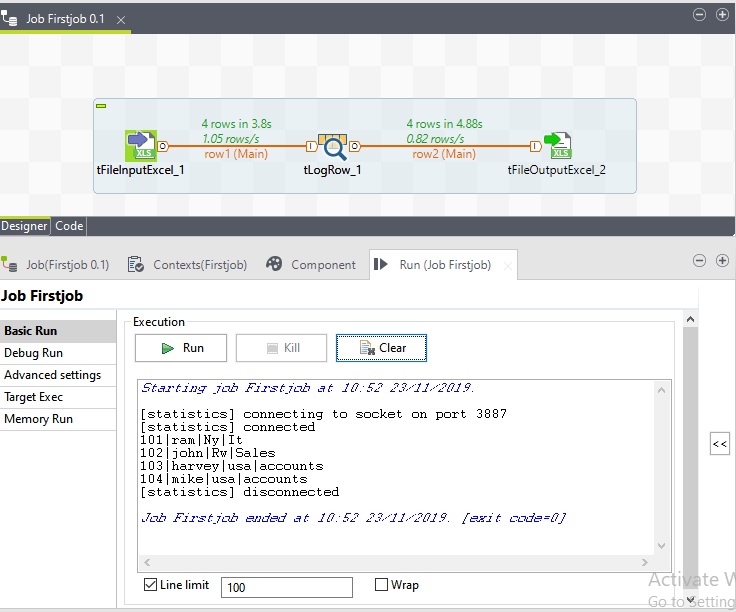

Step14:

To execute the Job, click the Run button, as shown in the screenshot below:

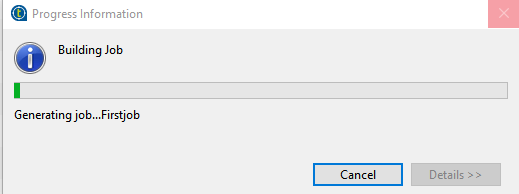

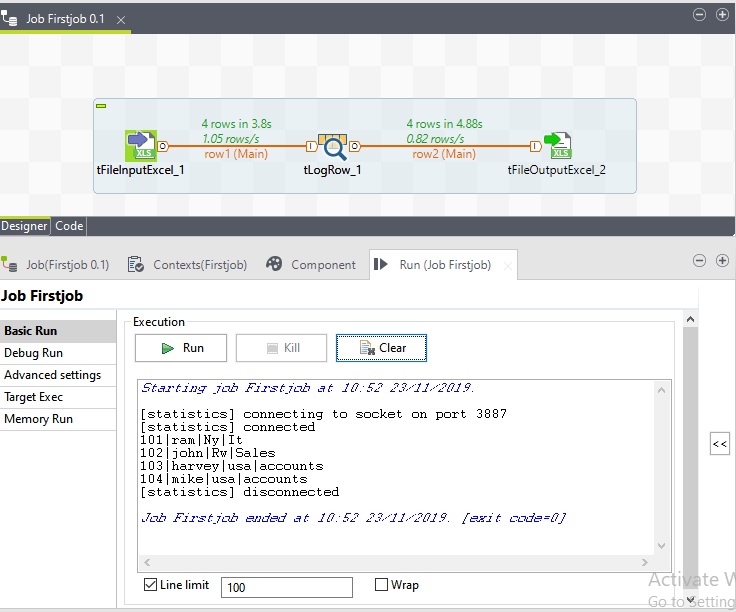

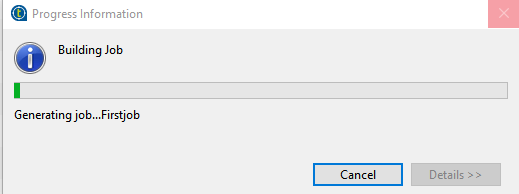

The execution of FirstJob starts, as shown in the screenshot below:

The output is displayed in Basic mode, with fields separated by "|".

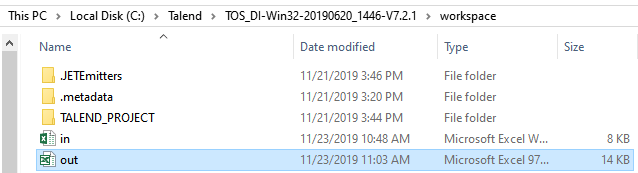

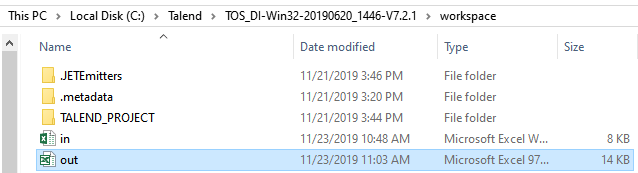

Our output file is saved in Excel format at the given output path, as shown in the screenshot below:

Handling Job Execution in Talend:

In this section, we will learn how to handle Job execution.

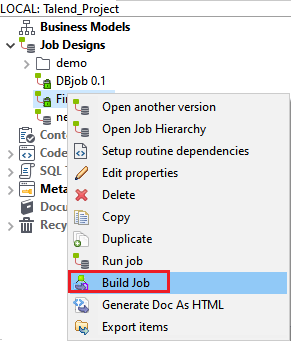

- To see how Job execution is handled, we will use the above example.

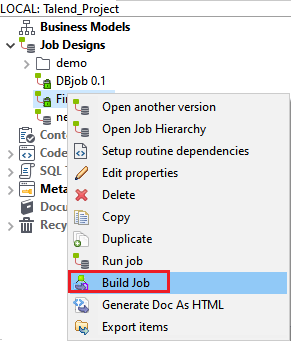

- Right-click the Job in the Repository pane and select Build Job, as shown in the image below:

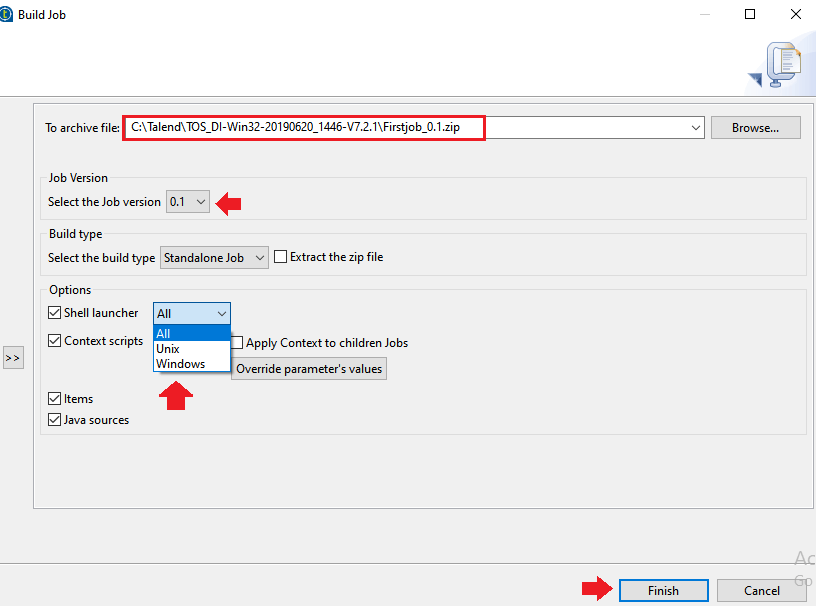

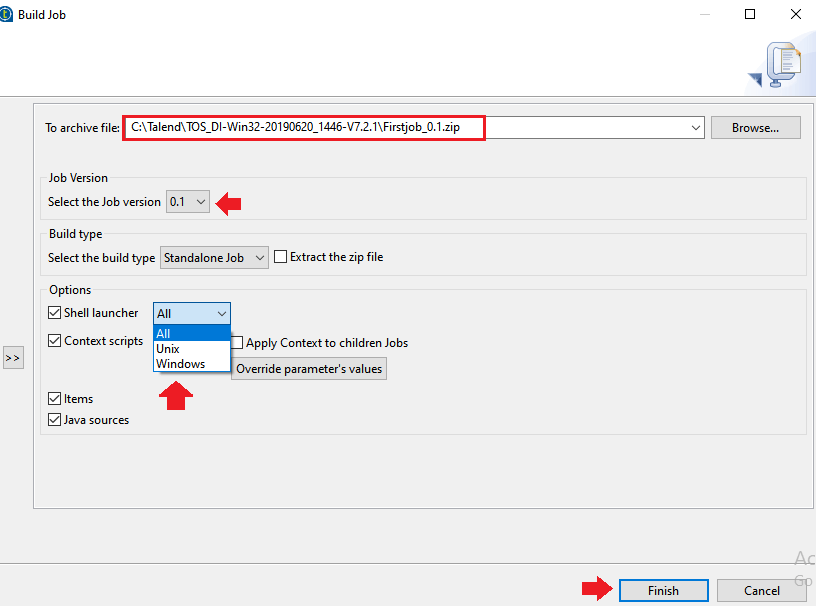

- The Build Job window opens, where we can give the path for the Job in the To archive file field, change the version of the Job in the Job Version section, and select the build type in the Build type field.

- Then, click the Finish button.

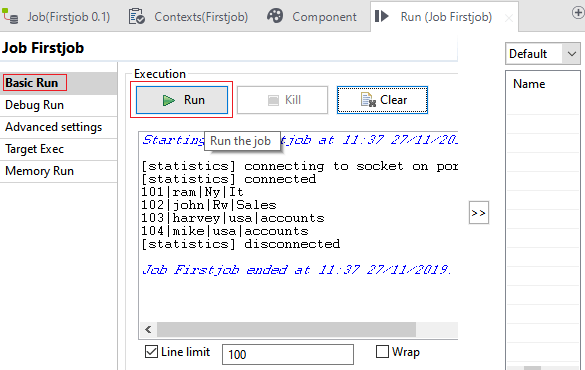
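Once the archive has been built and unzipped, the Job can be started outside the Studio. The sketch below is a hedged illustration in plain Java using the standard `ProcessBuilder` class. It assumes that the unzipped folder contains launcher scripts named `FirstJob_run.sh` and `FirstJob_run.bat`; that naming, the class `BuiltJobLauncher`, and the folder passed on the command line are assumptions to verify against your own build output.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;
import java.util.List;

// Hedged sketch: launches the script of an unzipped Job build.
class BuiltJobLauncher {

    // Builds the command line for the launcher script inside the unzipped build.
    // ASSUMPTION: the script is named <jobName>_run.sh or <jobName>_run.bat.
    static List<String> command(Path jobDir, String jobName, boolean windows) {
        if (windows) {
            return Arrays.asList("cmd", "/c", jobDir.resolve(jobName + "_run.bat").toString());
        }
        return Arrays.asList("sh", jobDir.resolve(jobName + "_run.sh").toString());
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 1) {
            System.out.println("Usage: java BuiltJobLauncher <path-to-unzipped-job-folder>");
            return;
        }
        Path jobDir = Paths.get(args[0]);
        boolean windows = System.getProperty("os.name").toLowerCase().startsWith("windows");

        // inheritIO() forwards the Job's console output to this process.
        Process process = new ProcessBuilder(command(jobDir, "FirstJob", windows))
                .inheritIO()
                .start();
        System.out.println("Exit code: " + process.waitFor());
    }
}
```

Because the built Job runs on whichever JVM the launcher script finds, the JVM requirement mentioned earlier applies to the target machine as well.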

Run the Job in the Normal Mode

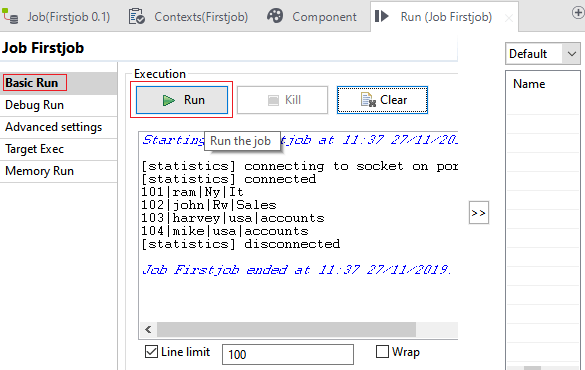

To run the Job in normal mode, follow the process below:

Select the Basic Run tab in the Run (Job FirstJob) view, and click the Run button to start the execution, as shown in the screenshot below:

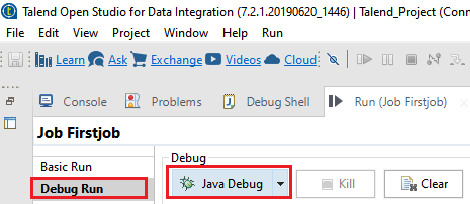

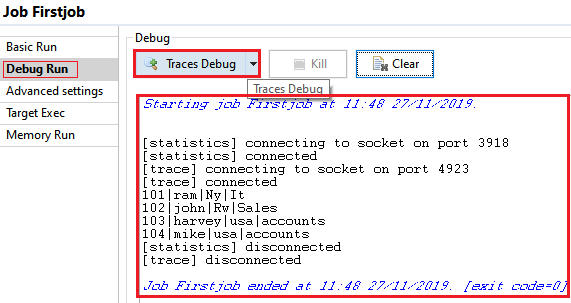

Running the Job in Debug Mode

To identify possible bugs in the Job execution, we can run the Job in debug mode.

Talend Studio offers two options for running a Job in debug mode:

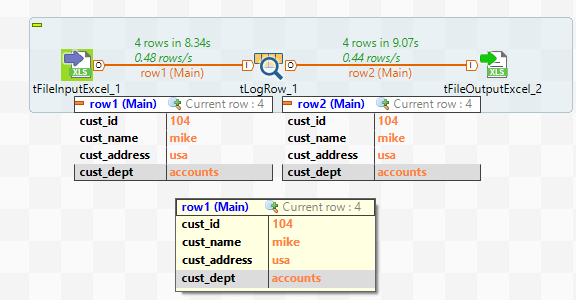

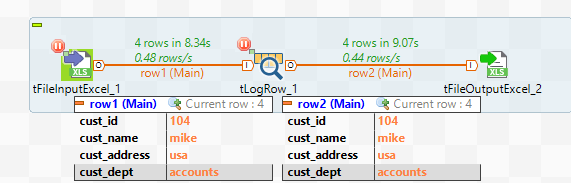

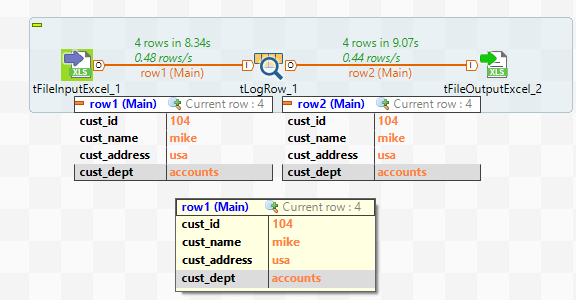

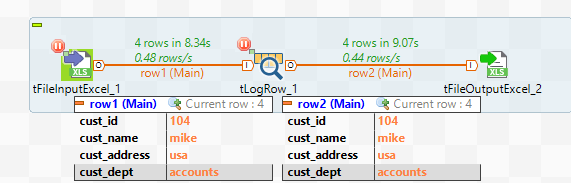

Trace debug

The trace feature allows us to monitor data processing while running the Job in Talend Studio.

It gives a row-by-row view of component behavior and displays the dynamic result next to the row link in the design workspace window.

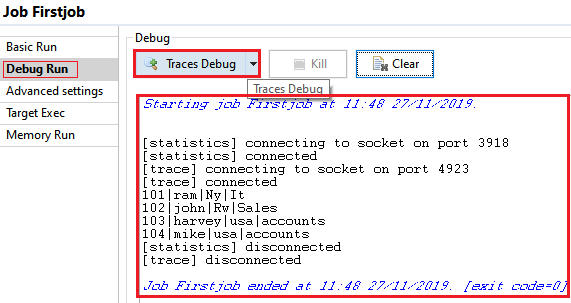

To access Trace debug mode, follow the process below:

- Click the Run view to access it.

- Click the Debug Run tab to access the debug execution modes, and select Trace Debug to execute the Job in trace mode.

After debugging the Job, our design workspace window will look like this:

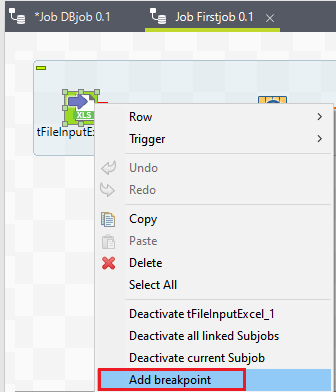

Java debug

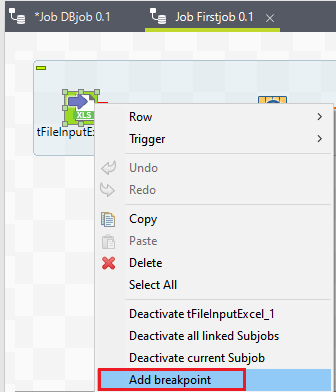

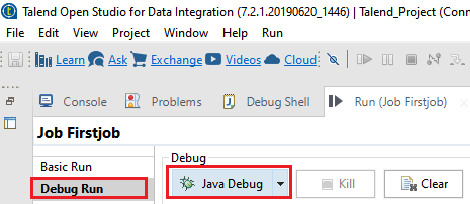

Before running our Job in Java Debug mode, the first step is to add breakpoints.

To add a breakpoint to a component that we want to debug, follow the process below:

- Right-click the component in the design workspace and select Add breakpoint from the pop-up menu.

This makes the Job stop automatically at each breakpoint.

- We can run the Job step by step and check each component that has a breakpoint for the expected behavior and variable values.

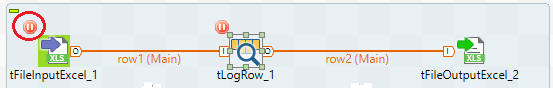

- Here, we have added breakpoints to tFileInputExcel and tLogRow.

- After adding the breakpoints, go to the Debug button of the Run panel and select Java Debug.

- We can see from the screenshot below that FirstJob is executing in debug mode according to the breakpoints.

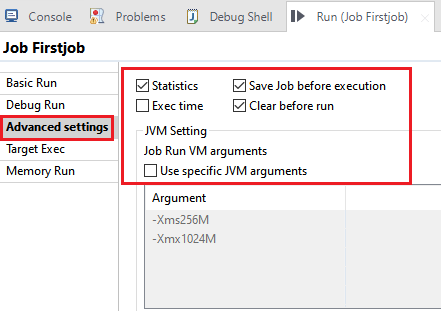

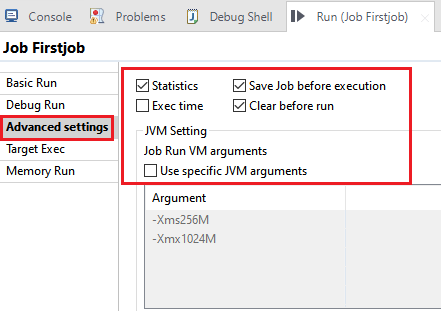

Advanced Settings

The Advanced settings tab of the Run view provides various advanced execution settings that make Job execution handier.

The advanced settings include several features, such as Statistics, Exec time, Save Job before execution, Clear before run, and JVM Setting. Each works as follows:

- Statistics: Displays the rate of processing.

- Exec time: Shows the execution time in the console at the end of the execution.

- Save Job before execution: Automatically saves the Job before the execution begins.

- Clear before run: Clears all the results of a previous execution before re-executing the Job.

- JVM Setting: Lets us configure the Java arguments passed to the Job.
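To give a feel for what the Statistics, Exec time, and JVM settings report, here is a minimal plain-Java sketch. It is not Talend code: the class `RunStatsSketch` and the counting loop that stands in for row processing are assumptions. It measures elapsed time with `System.nanoTime()`, derives a rows-per-second rate, and prints the maximum heap size, which reflects a standard JVM argument such as `-Xmx` when one is supplied.

```java
// Conceptual sketch of the figures behind Statistics, Exec time, and JVM settings.
class RunStatsSketch {

    // Rows processed per second; returns 0 when no time has elapsed.
    static double rowsPerSecond(long rows, long elapsedNanos) {
        if (elapsedNanos <= 0) {
            return 0.0;
        }
        return rows / (elapsedNanos / 1_000_000_000.0);
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        long rows = 0;
        for (int i = 0; i < 1_000_000; i++) {
            rows++;                                // stand-in for processing one row
        }
        long elapsed = System.nanoTime() - start;

        System.out.println("Rows processed: " + rows);
        System.out.println("Rows per second: " + rowsPerSecond(rows, elapsed));
        System.out.println("Exec time (ms): " + elapsed / 1_000_000);

        // The maximum heap depends on JVM arguments such as -Xmx.
        long maxHeapMb = Runtime.getRuntime().maxMemory() / (1024 * 1024);
        System.out.println("Max heap (MB): " + maxHeapMb);
    }
}
```

Running the sketch once with `java -Xmx256m RunStatsSketch` and once with `java -Xmx1024m RunStatsSketch` shows how a Java argument changes the memory available to a program, which is the kind of tuning the JVM Setting option exposes for a Job.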
