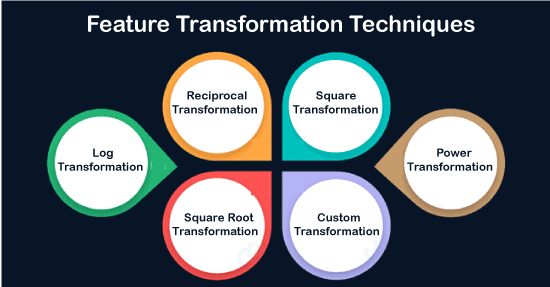

Feature Transformation in Data Mining

Data preprocessing is one of the crucial steps of any data science project. Real-life data is often unorganized and messy, so we first have to preprocess it and then feed the processed data to our data science models to get good performance. One part of preprocessing is feature transformation, which we discuss in this article. Feature transformation is worth considering regardless of the model we are using, whether it is a classification task, a regression task, or an unsupervised learning model.

What is Feature Transformation?

Feature transformation is a mathematical transformation in which we apply a formula to a particular column (feature) and transform its values into a form that is more useful for further analysis. It is a technique by which we can boost model performance. It is often treated as part of feature engineering, which creates new features from existing features that may help improve model performance. These new features may not have the same interpretation as the original features, but they may have more explanatory power in a different space than in the original space. Feature transformation can also be used for feature reduction. It can be done in many ways, for example by linear combinations of the original features or by using non-linear functions. It can also help machine learning algorithms converge faster.

Why do we need Feature Transformations?

Some data science models, such as linear and logistic regression, tend to perform better when the variables are approximately normally distributed. Variables in real datasets are more likely to follow a skewed distribution. By applying a transformation to these skewed variables, we can map the skewed distribution closer to a normal distribution and so improve the performance of our models.
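As a rough illustration of the point above, the following sketch draws a right-skewed sample and compares its skewness before and after a log transformation. The `income` data is synthetic (log-normal draws chosen only for illustration), and `sample_skewness` is a helper defined here, not a library function.

```python
import numpy as np


def sample_skewness(x):
    """Moment-based skewness: the mean of the cubed z-scores.

    Values near 0 indicate a roughly symmetric distribution;
    positive values indicate a longer right tail.
    """
    x = np.asarray(x, dtype=float)
    z = (x - x.mean()) / x.std()
    return float(np.mean(z ** 3))


rng = np.random.default_rng(seed=0)

# Hypothetical right-skewed feature (assumption: log-normal draws).
income = rng.lognormal(mean=10.0, sigma=1.0, size=10_000)

skew_before = sample_skewness(income)
skew_after = sample_skewness(np.log(income))

print(f"skewness before log transform: {skew_before:.2f}")
print(f"skewness after log transform:  {skew_after:.2f}")
```

Because the logarithm of a log-normal variable is normally distributed, the skewness after the transformation should be close to zero. Real features will rarely behave this cleanly.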
The normal distribution is a very important distribution in statistics, and many statistical methods rely on it. Many quantities in nature, such as height and weight, are approximately normally distributed, but features in real-life data often are not; income, for example, is typically right-skewed. Even so, the normal distribution is often the best available approximation when we are unaware of the underlying distribution pattern.

Feature Transformation Techniques

The following transformation techniques can be applied to data sets:

1. Log Transformation: This transformation generally brings the data closer to a normal distribution, although the result will not follow a normal distribution exactly. It cannot be applied to features that have zero or negative values. It is mostly applied to right-skewed data. It converts data from a multiplicative scale to an additive scale, so exponentially growing data becomes linear.
2. Reciprocal Transformation: This transformation is not defined for zero. It is a powerful transformation with a radical effect: it reverses the order among values of the same sign, so large values become small and vice versa.
3. Square Transformation: This transformation is mostly applied to left-skewed data.
4. Square Root Transformation: This transformation is defined only for non-negative numbers. It can be used to reduce the skewness of right-skewed data, but it is weaker than the log transformation.
5. Custom Transformation: scikit-learn's FunctionTransformer forwards its X (and optionally y) arguments to a user-defined function or function object and returns this function's result. The resulting transformer will not be pickleable if a lambda is used as the function. This is useful for stateless transformations such as taking the log of frequencies, doing custom scaling, etc.
6. Power Transformations: Power transforms are a family of parametric, monotonic transformations that make data more Gaussian-like. The optimal parameter for stabilizing variance and minimizing skewness is estimated through maximum likelihood. This is useful for modeling issues related to non-constant variance or other situations where normality is desired. Currently, scikit-learn's PowerTransformer supports the Box-Cox transform and the Yeo-Johnson transform. Box-Cox requires the input data to be strictly positive (not even zero is acceptable), while Yeo-Johnson supports both positive and negative data. By default, zero-mean, unit-variance normalization is applied to the transformed data.
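The first four transformations in the list above can be sketched with NumPy. The array `x` is made-up example data, and `tail_ratio` is a helper defined here for illustration (the largest value divided by the median), used as a crude indicator of how far the largest value sits from the bulk of the data.

```python
import numpy as np

# Hypothetical strictly positive feature with one large value on the right.
x = np.array([1.0, 2.0, 4.0, 8.0, 100.0])

log_x = np.log(x)       # requires x > 0
recip_x = 1.0 / x       # undefined at x == 0; reverses the order of positive values
square_x = x ** 2       # stretches large values; typically used on left-skewed data
sqrt_x = np.sqrt(x)     # requires x >= 0; compresses large values less than log


def tail_ratio(values):
    """Largest value divided by the median (illustrative helper)."""
    return float(np.max(values) / np.median(values))


for name, values in [
    ("original", x),
    ("log", log_x),
    ("reciprocal", recip_x),
    ("square", square_x),
    ("sqrt", sqrt_x),
]:
    print(f"{name:>10}: {np.round(values, 3)}  tail ratio = {tail_ratio(values):.2f}")
```

On this toy array the log transform pulls the largest value closer to the median than the square root does, which is one way to read the statement that the square root is the weaker of the two. The square transform does the opposite and pushes the largest value further out.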

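The custom and power transformations in items 5 and 6 match the behavior of scikit-learn's `FunctionTransformer` and `PowerTransformer`. The following is a minimal sketch, assuming scikit-learn and NumPy are installed. The data is synthetic, and the choice of `np.log1p` as the custom function is an assumption made for illustration.

```python
import numpy as np
from sklearn.preprocessing import FunctionTransformer, PowerTransformer

rng = np.random.default_rng(seed=0)

# Synthetic data (assumption): one strictly positive column,
# and a shifted copy that also contains negative values.
X_pos = rng.lognormal(mean=0.0, sigma=1.0, size=(500, 1))
X_mixed = X_pos - 1.0

# Custom transformation: passing a named function (not a lambda)
# keeps the transformer pickleable.
log_tf = FunctionTransformer(func=np.log1p, inverse_func=np.expm1)
X_log = log_tf.fit_transform(X_pos)

# Box-Cox: input must be strictly positive.
box_cox = PowerTransformer(method="box-cox")
X_bc = box_cox.fit_transform(X_pos)

# Yeo-Johnson: accepts positive and negative values.
yeo_johnson = PowerTransformer(method="yeo-johnson")
X_yj = yeo_johnson.fit_transform(X_mixed)

# The fitted power parameter (one per column) is estimated by maximum likelihood.
print("Box-Cox lambda:    ", box_cox.lambdas_)
print("Yeo-Johnson lambda:", yeo_johnson.lambdas_)

# With the default standardize=True, the output has zero mean and unit variance.
print("Box-Cox mean/std:    ", X_bc.mean(), X_bc.std())
print("Yeo-Johnson mean/std:", X_yj.mean(), X_yj.std())
```

Fitting the Box-Cox variant on `X_mixed` would raise an error because that column contains non-positive values, which is why Yeo-Johnson is used for it here.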