The Great Tree List Recursion Problem in C++

In this article, we will discuss the great tree list problem in C++ with several examples.

Introduction:

Think of a program that determines a number's factorial. The function takes a number N as input and returns the factorial of N. It is naturally recursive: the result for N is defined in terms of the result for N - 1. Summing the natural numbers from 1 to N follows the same pattern.

Example output:

Sum of natural numbers from 1 to 5 is: 15

Recursion Types:

There are two main types of recursion:

1. Linear Recursion:

A function that calls itself at most once each time it executes is said to be linearly recursive. The factorial function is a good illustration. The name "linear recursion" refers to the single chain of calls the function makes, one after another; when each call does a constant amount of work, the running time is also linear in the input.

Example output:

Countdown: 5 4 3 2 1

Let's now define the recurrence relation for the time complexity: T(n) = T(n - 1) + K
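Unrolling the recurrence step by step shows where the linear bound comes from. The derivation below is a sketch that assumes the base case T(0) takes constant time, which the text does not state explicitly.

```latex
\begin{aligned}
T(n) &= T(n-1) + K \\
     &= T(n-2) + 2K \\
     &= T(n-3) + 3K \\
     &\;\;\vdots \\
     &= T(0) + nK \\
     &= O(n)
\end{aligned}
```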

According to this recurrence relation, the time needed to execute the function with parameter n equals the time needed for the recursive call with n - 1, plus a fixed time K for printing the value of n. The function is therefore called n times in total, and each call does a fixed amount of work, so the time complexity is O(n): the running time is linear in n.

2. Tree Recursion:

When the recursive case makes more than one recursive call, it is referred to as tree recursion, because the calls branch out like a tree. The Fibonacci sequence is a classic illustration. Tree-recursive functions are generally not linear in the size of their argument; the naive Fibonacci function, for example, runs in exponential time.

Example output:

Sum of tree values: 21

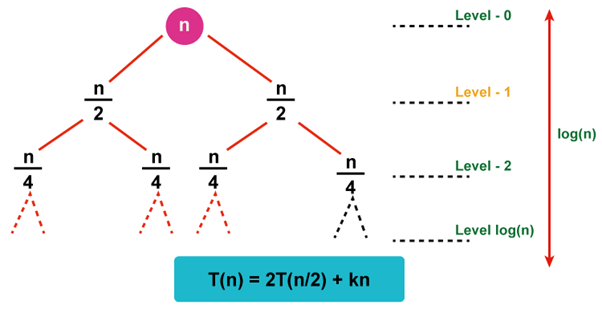

How does the recursion tree method work?

The recursion tree method is used to solve recurrence relations such as T(N) = T(N/2) + N, or the two discussed in the types of recursion section (linear recursion and tree recursion). Recurrence relations of this kind usually arise from divide-and-conquer algorithms: a larger problem is broken down into smaller subproblems, and it takes additional time to combine the answers to those subproblems.

For instance, T(N) = 2T(N/2) + O(N) is the recurrence relation for merge sort. The time needed to combine the answers to the two subproblems, each of size N/2, is O(N), which is true at the implementation level as well: in the merge step, we combine two sorted arrays into a new sorted array in linear time.

Similarly, the recurrence relation for binary search is T(N) = T(N/2) + 1. Each step of a binary search cuts the search space in half, and the comparison that decides which half to keep is a constant-time operation, which is why +1 is added to the recurrence relation.

Now consider the recurrence relation T(n) = 2T(n/2) + Kn, where Kn denotes the time required to combine the answers to the two subproblems of size n/2. Let's depict the recursion tree for this recurrence relation.

We may draw a few conclusions from studying the recursion tree above, including:

How to Use a Recursion Tree to Solve Recurrence Relations:

In the recursion tree approach, the cost of a subproblem is the amount of time needed to solve it. Whenever the word "cost" appears in connection with a recursion tree, it means nothing more than that time. Solving a recurrence relation with the recursion tree approach takes a few steps, including:
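As a worked illustration of the approach, consider the recurrence T(n) = 2T(n/2) + Kn discussed earlier. The derivation below is a sketch that assumes n is a power of two and that the base case T(1) equals some constant c; neither assumption is stated in the text. It computes the cost of each level, adds the cost of the leaves, and sums the result.

```latex
\begin{aligned}
\text{cost of level } i &= 2^{i} \cdot K \cdot \frac{n}{2^{i}} = Kn,
  \qquad 0 \le i < \log_2 n \\
\text{cost of the leaves} &= n \cdot T(1) = cn \\
T(n) &= \sum_{i=0}^{\log_2 n - 1} Kn \; + \; cn
      = Kn \log_2 n + cn
      = O(n \log n)
\end{aligned}
```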

Conclusion:
