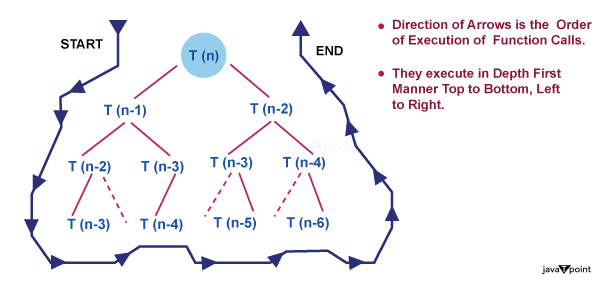

Recursion Tree Method

Recursion is a fundamental concept in computer science and mathematics that allows a function to call itself, solving a complex problem by breaking it into smaller instances of the same problem. A visual representation commonly used to understand and analyze the execution of recursive functions is the recursion tree. In this article, we will explore the theory behind recursion trees, their structure, and their significance in understanding recursive algorithms.

What is a Recursion Tree?

A recursion tree is a graphical representation of the execution flow of a recursive function. It breaks the recursive calls down visually, showing how the algorithm branches out and eventually reaches a base case. The tree structure helps in analyzing time complexity and in understanding the recursive process involved.

Tree Structure

Each node in a recursion tree represents one recursive call. The initial call is drawn at the top (the root), and the calls it makes branch out beneath it, so the tree grows downward into a hierarchy. The branching factor of a node equals the number of recursive calls made by that invocation of the function. The depth of the tree is the length of the longest chain of nested calls, from the initial call down to a base case.

Base Case

The base case is the termination condition of a recursive function. It defines the point at which the recursion stops and the function starts returning values. In a recursion tree, nodes representing the base case are leaf nodes, since they make no further recursive calls.

Recursive Calls

The child nodes in a recursion tree represent the recursive calls made within the function. Each child node corresponds to a separate recursive call, which creates a new subproblem. The arguments passed to these calls may differ, so the subproblems can differ in size and character.
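To make the node, leaf, and depth vocabulary concrete, here is a minimal Python sketch that records the recursion tree of a naive Fibonacci function as explicit nodes. The `Node` class and the `build_fib_tree`, `depth`, `leaves`, and `count_nodes` helpers are hypothetical names chosen for illustration; Fibonacci is used only because each non-base call makes two recursive calls, giving a branching factor of 2.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One node of the recursion tree: a single call fib(arg)."""
    arg: int
    children: list["Node"] = field(default_factory=list)


def build_fib_tree(n: int) -> Node:
    """Record the calls made by naive fib(n) as an explicit tree."""
    node = Node(n)
    if n >= 2:  # recursive case: two calls, so branching factor 2
        node.children.append(build_fib_tree(n - 1))
        node.children.append(build_fib_tree(n - 2))
    return node  # n < 2 is the base case: a leaf with no children


def depth(node: Node) -> int:
    """Length of the longest chain of nested calls below this node."""
    return 1 + max((depth(c) for c in node.children), default=-1)


def leaves(node: Node) -> int:
    """Number of base-case calls (leaf nodes)."""
    if not node.children:
        return 1
    return sum(leaves(c) for c in node.children)


def count_nodes(node: Node) -> int:
    """Total number of calls (nodes) in the tree."""
    return 1 + sum(count_nodes(c) for c in node.children)


tree = build_fib_tree(5)
print(depth(tree), leaves(tree), count_nodes(tree))  # 4 8 15
```

For fib(5), the tree has depth 4, 8 leaves (base cases), and 15 nodes in total, one per call.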

Execution Flow

Traversing a recursion tree reveals the execution flow of a recursive function. Starting from the initial call at the root, execution follows one branch downward, call by call, until it reaches a base case. Once a base case is reached, calls start to return, and each returned value can be written next to its node. Control then moves on to the next unexplored branch, so the tree is visited depth-first, and the traversal ends when the root call returns.

Time Complexity Analysis

Recursion trees aid in analyzing the time complexity of recursive algorithms. By examining the structure of the tree, we can count the recursive calls made and the work done at each level. Summing that work gives the overall running time of the algorithm and helps identify inefficiencies or opportunities for optimization.

Introduction
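The depth-first execution flow described above can be observed by logging every call and return. Below is a minimal Python sketch, assuming a naive Fibonacci function as the example; `traced_fib` is a hypothetical name, and the indentation in the log mirrors each call's depth in the recursion tree.

```python
def traced_fib(n: int, log: list[str], depth: int = 0) -> int:
    """Naive Fibonacci that records each call and return, indented by depth."""
    indent = "  " * depth
    log.append(f"{indent}call fib({n})")
    if n < 2:
        result = n  # base case: a leaf of the recursion tree
    else:
        # The left branch is explored completely before the right one starts.
        result = traced_fib(n - 1, log, depth + 1) + traced_fib(n - 2, log, depth + 1)
    log.append(f"{indent}return fib({n}) = {result}")
    return result


events: list[str] = []
traced_fib(3, events)
print("\n".join(events))
# call fib(3)
#   call fib(2)
#     call fib(1)
#     return fib(1) = 1
#     call fib(0)
#     return fib(0) = 0
#   return fib(2) = 1
#   call fib(1)
#   return fib(1) = 1
# return fib(3) = 2
```

Each node appears twice in the log, once when it is entered and once when its value returns, which is exactly the order of a depth-first traversal of the tree.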

Recursion Types

Generally speaking, there are two forms of recursion:

Linear Recursion

Tree Recursion
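The two forms differ in the shape of their recursion trees. In linear recursion, each call makes at most one recursive call, so the tree is a single chain; in tree recursion, a call can make two or more recursive calls, so the tree branches. The minimal Python sketch below, with hypothetical function names, counts the calls (tree nodes) made by a linearly recursive factorial and by a tree-recursive sum that splits a list into halves.

```python
def factorial(n: int) -> tuple[int, int]:
    """Linear recursion: returns (n!, number of calls made)."""
    if n <= 1:
        return 1, 1  # base case: the single leaf at the end of the chain
    value, calls = factorial(n - 1)  # exactly one recursive call
    return n * value, calls + 1


def halves_sum(xs: list[int]) -> tuple[int, int]:
    """Tree recursion: returns (sum(xs), number of calls made)."""
    if len(xs) <= 1:
        return (xs[0] if xs else 0), 1  # base case: a leaf
    mid = len(xs) // 2
    # Two recursive calls per node (slicing copies, which is fine for illustration).
    left, left_calls = halves_sum(xs[:mid])
    right, right_calls = halves_sum(xs[mid:])
    return left + right, left_calls + right_calls + 1


print(factorial(8))                # (40320, 8)
print(halves_sum(list(range(8))))  # (28, 15)
```

For an input of size 8, the factorial makes 8 calls along one chain, while the halving sum makes 15 calls (2n − 1) arranged in a binary tree of height $\log_2 8 = 3$.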

What Is the Recursion Tree Method?

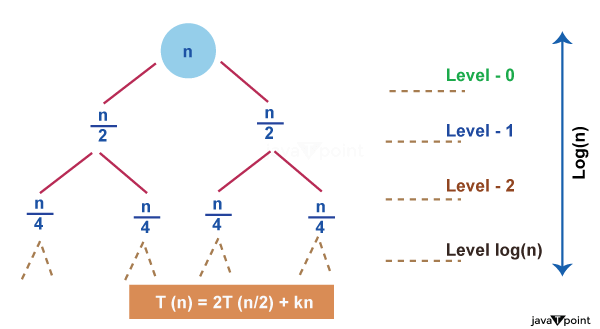

We may draw a few conclusions from studying the recursion tree above:

1. The value of a node depends only on the size of the problem at its level. The problem size is n at level 0, n/2 at level 1, n/4 at level 2, and so on.
2. The height of the tree is the number of levels below the root. Because this recurrence halves the problem size at every level, as divide-and-conquer recurrences of this form do, getting from problem size n down to problem size 1 takes $\log_2 n$ halving steps, so the height of this recursion tree is $\log_2 n$.
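Conclusions 1 and 2 can be checked numerically. Below is a minimal Python sketch, assuming n is a power of two so that every halving is exact; `level_sizes` is a hypothetical helper that lists the problem size at each level of a halving recursion.

```python
import math


def level_sizes(n: int) -> list[int]:
    """Problem size at each level when every call halves its input.

    Assumes n is a power of two, so every halving is exact.
    """
    sizes = [n]  # level 0: the original problem
    while sizes[-1] > 1:
        sizes.append(sizes[-1] // 2)  # level i + 1 works on half of level i
    return sizes


sizes = level_sizes(16)
height = len(sizes) - 1  # levels are numbered 0 .. height
print(sizes)                  # [16, 8, 4, 2, 1]
print(height, math.log2(16))  # 4 4.0
```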

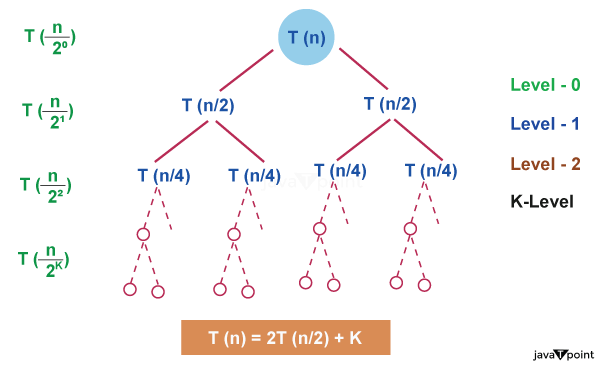

For example, for n = 16:

$$\log_2 16 = \log_2 2^4 = 4 \log_2 2 = 4 \qquad (\text{since } \log_a a = 1)$$

3. The non-recursive (second) term of the recurrence is the cost placed at the root, and the root of every subtree carries that same term evaluated at its own problem size.

Although the word "tree" appears in the name of this strategy, you don't need to be an expert on trees to comprehend it.

How to Use a Recursion Tree to Solve Recurrence Relations?

In the recursion tree technique, the cost of a subproblem is the amount of time needed to solve that subproblem. So whenever "cost" is mentioned in connection with a recursion tree, it simply means the time needed to solve a particular subproblem. Let's understand these steps with an example.

Example

Consider the recurrence relation

$$T(n) = 2T(n/2) + K$$

Solution

The given recurrence relation has the following properties:

- A problem of size n is divided into two subproblems, each of size n/2.
- The cost of combining the solutions to these subproblems is K.
- Each problem of size n/2 is divided into two subproblems, each of size n/4, and so on.
- At the last level, the subproblem size is reduced to 1; in other words, we finally hit the base case.

Let's follow the steps to solve this recurrence relation.

Step 1: Draw the Recursion Tree
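As a text stand-in for the drawing, here is a minimal Python sketch that prints the recursion tree of T(n) = 2T(n/2) + K as indented lines. `draw_tree` is a hypothetical helper, and it assumes n is a power of two so that every level halves exactly.

```python
def draw_tree(n: int, depth: int = 0) -> list[str]:
    """Text drawing of the recursion tree for T(n) = 2T(n/2) + K.

    Internal nodes show their combine cost K; leaves (size 1) are base cases.
    Assumes n is a power of two.
    """
    indent = "    " * depth
    if n == 1:
        return [f"{indent}T(1)  [base case]"]
    lines = [f"{indent}T({n})  cost K"]
    for _ in range(2):  # two subproblems, each of size n/2
        lines.extend(draw_tree(n // 2, depth + 1))
    return lines


print("\n".join(draw_tree(4)))
# T(4)  cost K
#     T(2)  cost K
#         T(1)  [base case]
#         T(1)  [base case]
#     T(2)  cost K
#         T(1)  [base case]
#         T(1)  [base case]
```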

Step 2: Calculate the Height of the Tree

When a number is repeatedly divided by 2, it eventually reaches 1. The same holds for the problem size n: suppose that after k divisions by 2, n becomes 1, which implies

$$\frac{n}{2^k} = 1$$

Here $n/2^k$ is the problem size at the last level, which is always 1. Taking $\log_2$ of both sides gives the value of k:

$$n = 2^k \implies \log_2 n = k \log_2 2 \implies k = \log_2 n$$

So the height of the tree is $\log_2 n$.

Step 3: Calculate the Cost at Each Level
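At level i (for i below the last level) there are $2^i$ nodes, each paying the combine cost K, so that level costs $2^i K$; the last level holds n base cases. The minimal Python sketch below tabulates these per-level costs. `level_costs` is a hypothetical helper, n is assumed to be a power of two, and `c` is an assumed placeholder for the constant O(1) cost of one base case.

```python
def level_costs(n: int, K: int, c: int) -> list[int]:
    """Cost of each level of the recursion tree for T(n) = 2T(n/2) + K.

    Assumes n is a power of two and T(1) = c, where c stands in for the
    constant O(1) cost of a base case.
    """
    height = n.bit_length() - 1  # log2(n) for a power of two
    # Levels 0 .. height - 1: 2^i internal nodes, each paying the combine cost K.
    costs = [(2 ** i) * K for i in range(height)]
    # Last level: 2^height = n leaves, each a base case costing c.
    costs.append(n * c)
    return costs


print(level_costs(16, K=1, c=1))  # [1, 2, 4, 8, 16]
print(level_costs(16, K=3, c=5))  # [3, 6, 12, 24, 80]
```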

Step 4: Calculate the Number of Nodes at Each Level

Let's first determine the number of nodes in the last level. From the recursion tree, level 0 has 1 node, level 1 has 2 nodes, level 2 has 4 nodes, and in general level i has $2^i$ nodes.

So the last level, level $\log_2 n$, has $2^{\log_2 n} = n$ nodes.

Step 5: Sum Up the Cost of All the Levels

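The cost of the last level is calculated separately because it is the base case: no merging is done there, so solving a single problem at this level costs some constant amount, which we take as O(1). Putting the values into the formula:

$$T(n) = \left(K + 2K + 4K + \dots + 2^{\log_2 n - 1} K\right) + n \cdot O(1)$$

where the bracketed part covers levels 0 to $\log_2 n - 1$ and the last term covers the n leaves at level $\log_2 n$.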

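Looking closely at the expression above, the first part is a finite geometric progression $a, ar, ar^2, \dots, ar^{m-1}$ with first term $a = K$, common ratio $r = 2$, and $m = \log_2 n$ terms. For $r \ne 1$, the sum of such a progression is

$$S_m = \frac{a(r^m - 1)}{r - 1}$$

so the internal levels cost

$$K \cdot \frac{2^{\log_2 n} - 1}{2 - 1} = K(n - 1)$$

and therefore $T(n) = K(n - 1) + n \cdot O(1) = O(n)$.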
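The closed form can be sanity-checked by evaluating the recurrence directly. Below is a minimal Python sketch, assuming n is a power of two and T(1) = c, where `c` is an assumed placeholder for the O(1) base-case cost; `t_direct` and `t_closed_form` are hypothetical names, and K = 3, c = 5 are arbitrary sample constants.

```python
def t_direct(n: int, K: int, c: int) -> int:
    """Evaluate T(n) = 2T(n/2) + K with T(1) = c by direct recursion.

    Assumes n is a power of two; c stands in for the O(1) base-case cost.
    """
    if n == 1:
        return c  # base case
    return 2 * t_direct(n // 2, K, c) + K


def t_closed_form(n: int, K: int, c: int) -> int:
    """Sum of the levels: K(n - 1) from the geometric progression plus c*n for the leaves."""
    return K * (n - 1) + c * n


# Sample constants K = 3 and c = 5 are arbitrary; any values work.
for k in range(11):
    n = 2 ** k
    assert t_direct(n, 3, 5) == t_closed_form(n, 3, 5)
print("T(n) = K(n - 1) + c*n for n = 1, 2, 4, ..., 1024")
```

Both terms of the closed form grow linearly in n, which matches the O(n) result.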
