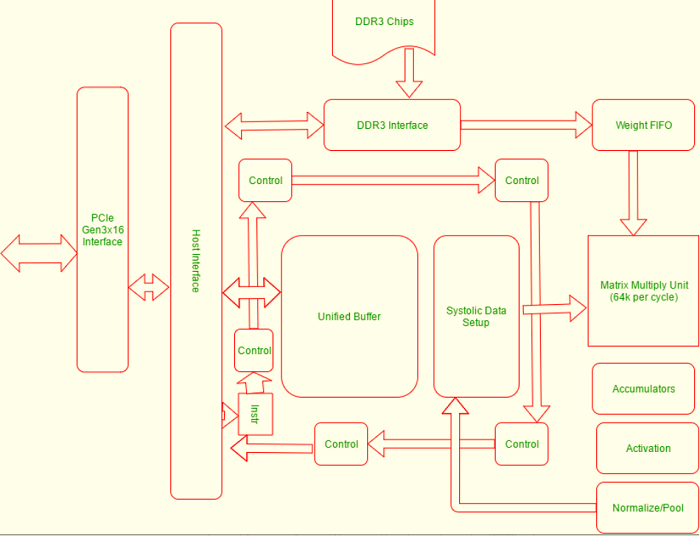

Tensor Processing Units

Machine learning is becoming more important every day, and traditional general-purpose microprocessors (CPUs) are not well suited to its workloads, whether that means training a neural network or running one. The parallel architecture of GPUs, originally designed for fast graphics processing, proved more efficient than CPUs for these tasks but still had limitations. To address this problem, Google designed an application-specific integrated circuit (ASIC) that serves as an AI accelerator for its TensorFlow machine learning framework. The chip is called the TPU (Tensor Processing Unit).

What is the TensorFlow Framework?

TensorFlow is an open-source library created by Google, originally for internal use. It is used primarily for dataflow programming and machine learning. TensorFlow computations are expressed as stateful dataflow graphs. The name TensorFlow comes from the operations that neural networks perform on multidimensional arrays of data, which are called "tensors." TensorFlow runs on Linux distributions, Windows, and macOS.

TPU Architecture

The following diagram shows the physical architecture of the units in a TPU.

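In Google's first-generation TPU, the computational resources are:

- Matrix Multiplier Unit (MXU): 65,536 8-bit multiply-and-add units for matrix operations.
- Unified Buffer (UB): 24 MB of SRAM that works as registers.
- Activation Unit (AU): hardwired activation functions.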
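In the first-generation TPU, the Matrix Multiplier Unit works on 8-bit values, sums the products into wider accumulators, and the Activation Unit then applies an activation function. The sketch below imitates that flow for a single dense layer in plain Python. It is a toy model under stated assumptions, not Google's implementation: the helper names (`quantize`, `mxu_matvec`, `dense_layer`), the symmetric quantization scheme, the choice of ReLU as the activation, and the dict standing in for the Unified Buffer are all hypothetical choices made for illustration.

```python
# Hypothetical sketch of a TPU-style dense layer: int8 operands, wide integer
# accumulation, then an activation. Names and the quantization scheme are
# illustrative assumptions, not Google's implementation.

INT8_MIN, INT8_MAX = -128, 127


def quantize(values, scale):
    """Map floats to int8 using a symmetric linear scale, clamping to the int8 range."""
    return [max(INT8_MIN, min(INT8_MAX, round(v / scale))) for v in values]


def mxu_matvec(weights_q, x_q):
    """Matrix-vector product built from 8-bit multiply-and-add steps.

    Python ints stand in for the hardware's wider (e.g. 32-bit) accumulators.
    """
    outputs = []
    for row in weights_q:
        acc = 0
        for w, x in zip(row, x_q):
            acc += w * x  # one multiply-and-add
        outputs.append(acc)
    return outputs


def relu(values):
    """Stand-in for a hardwired activation function in the Activation Unit."""
    return [max(0, v) for v in values]


def dense_layer(weights, x, w_scale, x_scale):
    """Quantize, multiply-accumulate, rescale, then activate one layer."""
    unified_buffer = {}  # plain dict standing in for on-chip storage
    unified_buffer["x_q"] = quantize(x, x_scale)
    weights_q = [quantize(row, w_scale) for row in weights]
    unified_buffer["acc"] = mxu_matvec(weights_q, unified_buffer["x_q"])
    # Each integer accumulator represents a real value scaled by w_scale * x_scale.
    rescaled = [a * w_scale * x_scale for a in unified_buffer["acc"]]
    return relu(rescaled)


if __name__ == "__main__":
    W = [[0.5, -0.5], [-1.0, 0.75]]
    x = [1.0, 2.0]
    print(dense_layer(W, x, w_scale=0.25, x_scale=0.5))  # prints [0, 0.5]
```

Doing the multiplications on small integers and rescaling only once at the end is what lets the hardware pack so many cheap multiply-and-add units onto one chip; the price is the rounding error introduced by quantization, which this example avoids by choosing exactly representable inputs.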

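Five major high-level instructions were designed to control how these resources operate:

- Read_Host_Memory: read data from memory.
- Read_Weights: read weights from memory.
- MatrixMultiply/Convolve: multiply or convolve the data with the weights and accumulate the results.
- Activate: apply the activation functions.
- Write_Host_Memory: write the results to memory.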
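The five high-level TPU instructions (Read_Host_Memory, Read_Weights, MatrixMultiply/Convolve, Activate, Write_Host_Memory) form a simple pipeline: load inputs, load weights, multiply, activate, store. The toy interpreter below runs such a program to show the order of operations. Only the instruction names come from the TPU design; the operand format, the `run` function, the dict-based memories, and the use of ReLU are hypothetical simplifications, and convolution is left out so that `MatrixMultiply` performs only a matrix-vector product.

```python
# Hypothetical toy machine: instruction names follow the five high-level TPU
# instructions, but operands and semantics are simplified assumptions.

def run(program, host_memory):
    """Execute (instruction, *operands) tuples; returns the updated host memory."""
    unified_buffer = {}  # on-chip storage for activations
    weights = None       # weights staged for the matrix unit
    accumulators = None  # results of the most recent matrix multiply

    for op, *args in program:
        if op == "Read_Host_Memory":
            src, dst = args
            unified_buffer[dst] = list(host_memory[src])
        elif op == "Read_Weights":
            (src,) = args
            weights = [list(row) for row in host_memory[src]]
        elif op == "MatrixMultiply":
            (src,) = args
            x = unified_buffer[src]
            accumulators = [sum(w * v for w, v in zip(row, x)) for row in weights]
        elif op == "Activate":
            (dst,) = args
            unified_buffer[dst] = [max(0, a) for a in accumulators]  # ReLU assumed
        elif op == "Write_Host_Memory":
            src, dst = args
            host_memory[dst] = list(unified_buffer[src])
        else:
            raise ValueError(f"unknown instruction: {op}")
    return host_memory


if __name__ == "__main__":
    memory = {"input": [1, 2], "layer1_w": [[1, -1], [2, 1]]}
    program = [
        ("Read_Host_Memory", "input", "x"),
        ("Read_Weights", "layer1_w"),
        ("MatrixMultiply", "x"),
        ("Activate", "y"),
        ("Write_Host_Memory", "y", "output"),
    ]
    print(run(program, memory)["output"])  # prints [0, 4]
```

Keeping the instruction set this small means the host only has to issue a short sequence per layer, while the heavy arithmetic stays inside the matrix unit.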

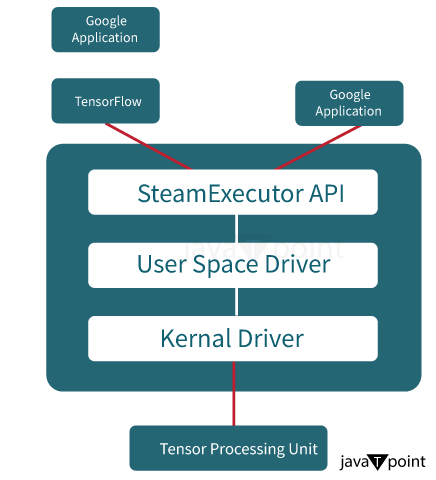

Here is the application stack that Google uses to run TensorFlow on the TPU.

The Advantages of TPU

These are just a few of the many benefits that TPUs offer:

When should we use a TPU?

These are the machine learning cases in which TPUs work best:
