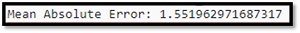

How Can TensorFlow Be Used with the Abalone Dataset to Build a Sequential Model

Artificial intelligence and machine learning have changed how hard problems are tackled across many industries. Deep learning is one important technique: it uses multi-layer neural networks to uncover intricate relationships and patterns in data. TensorFlow, the well-known open-source library from Google, helps us build and train these networks. In this post, we'll walk step by step through building a sequential model with TensorFlow and the Abalone dataset.

Understanding the Abalone Dataset:

The Abalone dataset is a classic machine learning dataset for regression tasks. The goal is to predict the age of abalones, a type of marine mollusk, from their physical characteristics. These include length, diameter, height, and several weight measurements, such as whole weight, shucked weight, and viscera weight. The dataset is available from the UCI Machine Learning Repository.

We will use the Abalone dataset to create a sequential model that estimates an abalone's age from its physical characteristics. TensorFlow will be used to build a neural network that learns the underlying patterns in the data.

Setting up TensorFlow:

Make sure TensorFlow is installed on your system before you start constructing the model. You can install it with pip by running the command pip install tensorflow.

Building the Sequential Model:

Creating a sequential model in TensorFlow means specifying, in order, the layers through which the data will pass: an input layer, one or more hidden layers, and an output layer. To create and train the network, we will use Keras, TensorFlow's high-level API.
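To make the idea of an ordered stack of layers concrete, here is a minimal sketch using the Keras API. The layer sizes (64 units) and the count of seven numeric input features are illustrative assumptions, not values given in this article.

```python
import tensorflow as tf

# Assumption: seven numeric measurements per abalone are used as inputs.
NUM_FEATURES = 7

# Layers are listed in the order the data flows through them.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(NUM_FEATURES,)),          # input: one row of features
    tf.keras.layers.Dense(64, activation="relu"),   # hidden layer (size is illustrative)
    tf.keras.layers.Dense(64, activation="relu"),   # hidden layer (size is illustrative)
    tf.keras.layers.Dense(1),                       # output: one continuous value (age)
])

# Print a table of layers, output shapes, and parameter counts.
model.summary()
```

Passing the layers as a list is equivalent to calling .add() once per layer; either style produces the same model.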
Here is a step-by-step guide to creating a sequential model with TensorFlow and the Abalone data:

1. Importing Libraries: Import the required libraries, including TensorFlow and the relevant layers from the Keras module.
2. Data Preprocessing: Load the Abalone dataset and perform the necessary preprocessing, such as scaling the features, splitting the data into training and testing sets, and converting the labels (ages) into a numeric format suitable for regression.
3. Defining the Model: Initialize a sequential model using tf.keras.Sequential(). Then add a series of layers using the .add() method. For example, you can start with a densely connected (fully connected) layer, tf.keras.layers.Dense(), that receives the input features.
4. Configuring Layers: Configure the properties of each layer, such as the number of units (neurons), the activation function, and the input dimensions. For the hidden layers, you can experiment with different activation functions, such as ReLU.
5. Output Layer: The output layer should have a single neuron, since we are predicting a single continuous value (abalone age). For plain regression, the output layer usually needs no activation function, which leaves it linear.
6. Compiling the Model: Compile the model using the .compile() method. Specify the optimizer, the loss function, and the metrics to monitor during training. For regression tasks, mean squared error (MSE) is a commonly used loss function.
7. Training the Model: Train the model on the preprocessed training data using the .fit() method. Provide the training data, labels, batch size, number of epochs, and validation data if applicable.
8. Evaluating the Model: After training, evaluate the model on the testing data using the .evaluate() method. This shows how well the model predicts abalone ages on unseen data.
9. Making Predictions: Use the trained model to make predictions on new or unseen data using the .predict() method.
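The steps above can be sketched end to end as follows. This is a minimal sketch, not a tuned solution: to stay self-contained it uses randomly generated stand-in data with seven features in place of the real Abalone file, and the layer sizes, optimizer (Adam), batch size, and epoch count are assumptions chosen for illustration.

```python
import numpy as np
import tensorflow as tf

# Stand-in data (assumption): 500 random rows with 7 features. These are NOT
# real abalone measurements; replace X and y with the feature columns and the
# age column loaded from the dataset file.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(500, 7)).astype("float32")
y = (X.sum(axis=1) * 3.0 + rng.normal(0.0, 0.1, size=500)).astype("float32")

# Split into training and testing sets (80/20).
split = int(0.8 * len(X))
X_train, X_test = X[:split], X[split:]
y_train, y_test = y[:split], y[split:]

# Feature scaling: standardize using statistics from the training set only.
mean, std = X_train.mean(axis=0), X_train.std(axis=0)
X_train = (X_train - mean) / std
X_test = (X_test - mean) / std

# Define the model layer by layer with .add().
model = tf.keras.Sequential()
model.add(tf.keras.Input(shape=(7,)))
model.add(tf.keras.layers.Dense(64, activation="relu"))
model.add(tf.keras.layers.Dense(32, activation="relu"))
model.add(tf.keras.layers.Dense(1))  # single neuron, no activation (regression)

# Compile with an optimizer, the MSE loss, and mean absolute error as a metric.
model.compile(optimizer="adam", loss="mean_squared_error", metrics=["mae"])

# Train, holding out 20% of the training rows for validation.
history = model.fit(
    X_train, y_train,
    batch_size=32, epochs=5,
    validation_split=0.2, verbose=0,
)

# Evaluate on the held-out test set, then predict for a few unseen rows.
test_loss, test_mae = model.evaluate(X_test, y_test, verbose=0)
predictions = model.predict(X_test[:5], verbose=0)

print("Test MSE:", test_loss)
print("Test MAE:", test_mae)
print("Predicted ages:", predictions.ravel())
```

The printed numbers depend on the random weight initialization and, here, on synthetic data, so they say nothing about real abalone ages. With the real dataset, expect to train for more epochs and to tune the layer sizes.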

Conclusion:

In this article, we've walked through the process of using TensorFlow to build a sequential neural network model for predicting the age of abalones based on their physical attributes. We covered the steps of data preprocessing, model initialization, layer configuration, compilation, training, evaluation, and prediction. TensorFlow's flexibility and the simplicity of the Keras API make it a powerful tool for building complex neural network architectures to solve various machine learning tasks, including regression tasks like the one demonstrated with the Abalone dataset. As you continue your journey with deep learning, remember that experimentation, parameter tuning, and a solid understanding of your data are essential for achieving the best model performance.
