Introduction to PyCaret

I'd like to discuss PyCaret, a new Python machine-learning library, in this article. PyCaret is touted as a low-code resource for data scientists that aims to shorten a machine learning experiment's "hypothesis to insights cycle time." It makes it possible for data scientists to complete experiments quickly and effectively. With just a few lines of code, you can carry out intricate machine-learning tasks with the help of the PyCaret library.

Moez Ali, a data scientist, created PyCaret, and the project began in the summer of 2019. The emerging role of citizen data scientists, who complement professional data scientists and bring their expertise and unique skills to analytics-driven tasks, sparked his interest in the project. However, PyCaret is great for resident information researchers because of its straightforwardness, convenience, and low-code climate; proficient information researchers can likewise involve it as a feature of their AI work processes to assist with building fast models rapidly and productively. Ali told me that PyCaret isn't straightforwardly connected with the caret bundle in R; however, it was animated via caret maker Dr. Max Kuhn's work in R. The name Caret is short for Characterization and Relapse Preparing.

"In correlation with the other open-source AI libraries, PyCaret is another low-code library that can be utilized to supplant many lines of code with few words," said PyCaret maker Moez Ali. " Experiments become exponentially faster and more effective as a result.

In April 2020, the initial release of PyCaret 1.0.0 was made available, and on August 28, 2020, the most recent version, 2.1, was made available.

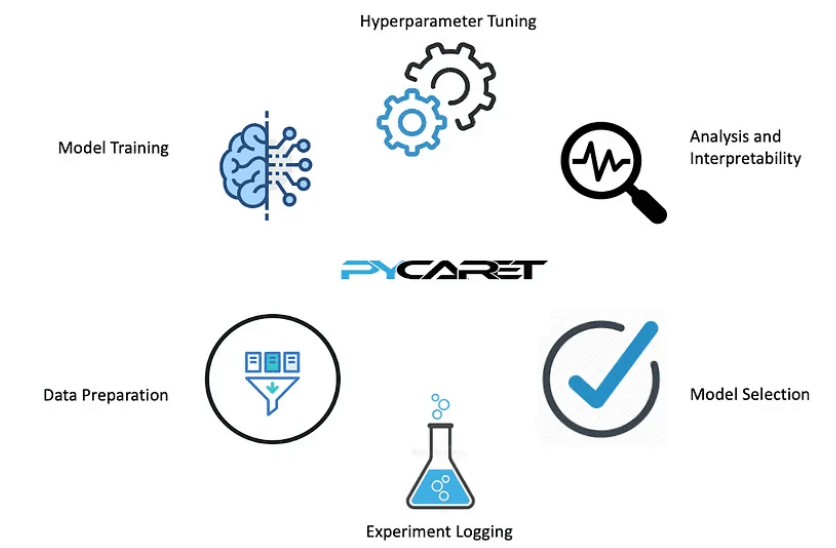

PyCaret is a powerful Python library that simplifies and streamlines the machine-learning workflow from start to finish. It's especially valuable for information researchers, investigators, and AI professionals who need to productively assemble, look at, and send AI models without jumping into the complexities of coding each step. Without going into the specifics of the code, here is an overview of PyCaret:

- AutoML(automated machine learning): AutoML, or machine learning, is the foundation upon which PyCaret is built. Data preprocessing, feature selection, model training, hyperparameter tuning, and model evaluation are all parts of the machine learning process that AutoML tools aim to automate.

- Friendly User Interface: PyCaret has an interface that is easy to use and understand for various machine-learning tasks. It works on the most common way of stacking and planning information, envisioning information circulations, and performing fundamental information preprocessing steps like dealing with missing qualities and encoding straight-out factors.

- Model Preparation and Determination: One of PyCaret's essential highlights is its ability to prepare and think about different AI models easily. It permits clients to choose from many calculations, like relapse, order, bunching, and oddity location, and it naturally applies these calculations to the information. The models are then ranked and compared by PyCaret using a variety of performance metrics.

- Hyperparameter Tuning: PyCaret optimizes the selected machine learning models through automated hyperparameter tuning. This interaction includes tracking down the best blend of model hyperparameters to work on model execution.

- Interpretability of the Model: PyCaret makes it simpler to comprehend how a machine learning model makes predictions by providing tools for model interpretation. To assist users in gaining insights into their models, it generates feature importance plots, SHAP (Shapley Additive explanations) values, and other interpretability metrics.

- Model Arrangement: When a palatable model is distinguished, PyCaret considers simple model organization. Clients can send their models in true applications, like web applications, APIs, or clump-handling pipelines.

- Adaptability and Reproducibility: PyCaret is built to handle big datasets and can be scaled to handle bigger data problems. It also makes replicating and sharing experiments easier by keeping track of all the preprocessing steps, model configurations, and results.

- Extensive documentation and support from the community: To assist users in understanding and resolving issues, PyCaret provides extensive documentation, tutorials, and a community forum. The people group is dynamic and frequently offers help and direction to rookies.

- Alignment with Other Libraries: To use the machine learning pipeline's capabilities, PyCaret can be integrated with well-known Python libraries, such as sci-kit-learn, XGBoost, LightGBM, and others.

- Data Visualization: Users of PyCaret can gain insight into their data with the help of various data visualization tools. It gives intelligent plots and graphs to imagine highlight conveyances, connections, and model execution measurements. For data exploration and model selection, these visualizations are invaluable.

- Data Preprocessing: PyCaret works on information preprocessing assignments like dealing with missing qualities, encoding straight-out factors, and scaling highlights. Users can concentrate more on the model-building process because it automates these steps.

- Ensemble Method: PyCaret upholds outfit strategies, consolidating various AI models to work on general execution. Clients can, without much of a stretch, make troupe models like packing, supporting, and stacking to improve prescient exactness.

- Analyses of Time Series: Time-series analysis has been added to PyCaret, making it suitable for temporal data-based forecasting and predictive modeling tasks. It incorporates highlights like time-series cross-approval and programmed slack determination.

- Natural Language Processing (NLP): PyCaret has extended its abilities to incorporate NLP assignments. Clients can perform message preprocessing, highlight designing, and construct message grouping models for applications like feeling investigation or message arrangement.

Why Utilize PyCaret?

PyCaret is a useful library that helps start-up businesses save money on hiring a team of data scientists and makes machine learning tasks easier for citizen data scientists. The hypothesis is - that less information researchers utilizing PyCaret can rival a bigger group utilizing customary instruments. Further, this library has assisted resident information researchers and assisted novices who need to begin investigating information science; however, they have minimal earlier information in this field.

PyCaret is a Python covering a few AI libraries and systems, including scikit-learn, XGBoost, Microsoft LightGBM, spaCy, and others.

The target group for PyCaret is:

- Data scientists with a lot of experience who want to work more efficiently

- Resident information researchers who can profit from a low-code AI arrangement

- Students of data science (I intend to incorporate PyCaret into my forthcoming "Introduction to Data Science" classes)

- Information science experts and specialists engaged with building MVP renditions of tasks.

Using PyCaret

Let's take a quick look at some important functions of PyCaret:

- The compare_models function uses cross-validation to evaluate performance metrics and trains all models in the model library with default hyperparameters. Metrics used to classify Precision, recall, accuracy, AUC, F1, Kappa, and MCC Regression metrics are R2, RMSLE, MAPE, MSE, RMSE, and MAE.

- The create_model function uses cross-validation to evaluate performance metrics and train a model with default hyperparameters.

- The tune_model function adjusts the model's hyperparameter using an estimator. It employs random grid search and has fully customizable tuning grids that have been pre-defined.

- After receiving a trained model object, the ensemble_model function returns a table containing common evaluation metrics' k-fold cross-validated scores.

- predict_model is a prediction and inference tool.

- plot_model - used to assess the presentation of the prepared AI model.

- Utility capabilities - valuable utility capabilities while dealing with your AI explore different avenues regarding PyCaret.

- Experiment logging: When you run your machine learning code, PyCaret embeds the MLflow tracking component as a backend API and UI for logging parameters, code versions, metrics, and output files for later analysis.

New elements in the 2.1 delivery include:

- On a GPU, you can now adjust the hyperparameters of various models: XGBoost, LightGBM, and Catboost.

- The deploy_model function, previously only available on AWS, has expanded the functionality of deploy-trained models to include deployment on Microsoft Azure and GCP.

- The plot_model capability presently incorporates a new "scale" boundary that you can use to control the goal and produce great plots for your explanatory information perception needs.

- With the new custom_scorer parameter in the tune_model function, user-defined custom loss functions can now be used.

- The Boruta algorithm is now included in PyCaret for improved feature engineering. Boruta was first published as an R package in September 2010 in the Journal of Statistical Software. Since then, it has been ported to Python.

Getting everything rolling with PyCaret

PyCaret accompanies a progression of very much-created instructional exercises (each with its own GitHub repo) that cover numerous significant areas of improvement for information researchers. The tutorials cover NLP, clustering, anomaly detection, classification, regression, and association rule mining. Additionally, several video tutorials are available, making it fairly simple to become familiar with these powerful tools.

In summary, PyCaret is an important device for information researchers and AI specialists who need to smooth out the AI cycle, from information planning to display organization. It offers a productive and easy-to-use method for exploring different avenues regarding different AI models and strategies without the requirement for broad coding, making it a brilliant decision for the two fledglings and experienced experts in the field.

|

For Videos Join Our Youtube Channel: Join Now

For Videos Join Our Youtube Channel: Join Now