Design a data structure that supports insert, delete, search and getRandom in constant time.

Runtime efficiency is critical for scalability when a data structure must support essential operations such as inserting, deleting, searching for, and retrieving elements. As a structure grows to hold more data, keeping these operations fast becomes challenging. Basic data structures such as arrays and linked lists handle some operations efficiently but not others: an array gives O(1) access by index but needs O(N) time to find or remove an arbitrary value, and a linked list must be traversed to find anything. A better solution is a hybrid data structure that combines the strengths of simpler constructs so that all four required operations run in constant time.

In this article, we will discuss a hybrid data structure that pairs a hash table with a dynamic array to support inserts, deletes, searches and random fetches on large datasets in O(1) time on average (hash table operations are O(1) in the average case, and array appends are O(1) amortized). By understanding how the two structures complement each other, developers can build containers designed for speed and scale. The key points are: the need for efficient data structures that can handle large volumes of data, the limitations of simpler data structures, a hash table plus array combination that makes all required operations efficient, and how this hybrid leverages the strengths of both.

How to Design the Data Structure?

Hash Table

The hash table can be implemented in Python using a dictionary. Dictionaries provide efficient key-value lookups, inserts and deletes, making them ideal for this purpose. We define a dictionary that maps each element to an index value, which is the element's position in the array. Setting and getting dictionary entries takes O(1) time on average. To store N elements, the dictionary needs space proportional to N (load factor considerations aside).
Python resizes the dictionary's underlying table automatically as it fills up, which keeps these operations O(1) on average. Where an entry lands inside the hash table depends on the hash function, which should distribute hashes uniformly to minimize collisions; the array index, by contrast, is simply the value stored under that key. Python dictionaries use the built-in hash() of each key, so no separate hashing library is needed. Cryptographic hashes such as MD5 or SHA-256 are designed for a different purpose and are unnecessary here.

Array

Python lists serve very well as dynamic arrays. Appending to the end, removing from the end and accessing an element by index each take O(1) time (amortized, in the case of append). Inserting or deleting at an arbitrary position takes O(N) time because later elements must shift, so this design only ever adds and removes at the end of the list. The list stores every element inserted into our data structure. By linking indices from the hash table to positions in this list, elements can be accessed or manipulated efficiently. Python lists grow automatically as elements are appended, over-allocating space so that appends stay O(1) amortized. In languages where the array capacity is managed manually, the initial size and growth factor can affect performance.

Knowing Operations:

Insert Operation

The insert operation adds a new element to the data structure. In the design described above, this means appending the element to the list and recording its list index in the dictionary, typically after checking that the element is not already present.
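Using the dictionary and list introduced earlier, an insert can be sketched as follows. This is a minimal illustration rather than a definitive implementation: the names `values`, `index_of` and `insert` are assumptions chosen for this example, and duplicates are assumed to be rejected.

```python
def insert(values, index_of, x):
    """Add x if it is not already stored; return True if it was added."""
    if x in index_of:          # average O(1) dictionary membership test
        return False
    index_of[x] = len(values)  # x will sit at the current end of the list
    values.append(x)           # amortized O(1) append
    return True


values, index_of = [], {}
insert(values, index_of, "a")
insert(values, index_of, "b")
insert(values, index_of, "a")  # duplicate, ignored

print(values)    # ['a', 'b']
print(index_of)  # {'a': 0, 'b': 1}
```

Recording the index before appending works because the new element always lands at position `len(values)`.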

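The time complexity of insertion depends on the data structure and its implementation. The goal is O(1) inserts regardless of how many elements are stored.

Delete Operation

The delete operation removes an existing element from the data structure. To keep removal O(1) with a list, the usual approach is to look up the element's index in the dictionary, move the last list element into that slot, update the moved element's index, and then drop the final list slot and the deleted element's dictionary entry.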
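A minimal sketch of an O(1) delete, assuming the common swap-with-last technique: instead of shifting elements, the last list element is moved into the vacated slot. The names `values`, `index_of` and `delete` are hypothetical, chosen for this example.

```python
def delete(values, index_of, x):
    """Remove x if present; return True if it was removed."""
    if x not in index_of:
        return False
    idx = index_of[x]
    last = values[-1]
    values[idx] = last    # overwrite x's slot with the last element
    index_of[last] = idx  # the moved element now lives at idx
    values.pop()          # O(1): drop the final slot
    del index_of[x]       # done last so the case x == last also works
    return True


values = ["a", "b", "c", "d"]
index_of = {v: i for i, v in enumerate(values)}
delete(values, index_of, "b")

print(values)    # ['a', 'd', 'c']
print(index_of)  # {'a': 0, 'c': 2, 'd': 1}
```

The order of elements in the list changes after a delete, which is acceptable here because the structure makes no ordering promise.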

As with insertion, deletes aim for O(1) time complexity, independent of the number of elements present.

Search Operation

The search operation checks whether a given element currently exists in the data structure. In this design it is a single dictionary lookup: the element is present exactly when it appears as a key in the dictionary.

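O(1) lookup time is the target here. Structures such as balanced binary search trees offer O(log N) lookups, which is still efficient but not constant.

getRandom Operation

The getRandom operation returns an element chosen uniformly at random from the data structure. Because all elements occupy contiguous list positions 0 to N-1, it is enough to pick a random index in that range and return the element stored there.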
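A minimal sketch of getRandom over the list, using Python's standard `random` module. The function name `get_random` and the optional `rng` parameter are assumptions made for this example; raising `IndexError` on an empty list is one possible choice, not a requirement of the design.

```python
import random
from collections import Counter


def get_random(values, rng=random):
    """Return a uniformly random stored element; the list must be non-empty."""
    if not values:
        raise IndexError("get_random() called on an empty structure")
    return values[rng.randrange(len(values))]  # O(1) index access


# Rough uniformity check with a seeded generator for repeatability.
rng = random.Random(0)
values = ["a", "b", "c"]
counts = Counter(get_random(values, rng) for _ in range(3000))
print(counts)  # each element should appear roughly 1000 times
```

This only works because the list has no holes: the swap-with-last delete keeps all N elements in positions 0 to N-1.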

Getting a random element also targets O(1) time, like the operations above.

Python Implementation for this DS

This program implements a randomized data structure that supports efficient search, insertion, deletion and random access. The structure combines a hash table and a dynamic array, allowing fast lookups and fast random selection. It provides insertion, deletion, membership search and uniform random selection, each in O(1) time on average.

Uniform random selection combined with fast access makes it suitable for applications that need randomness, such as shuffling playlists, games and sampling.

Algorithm Steps

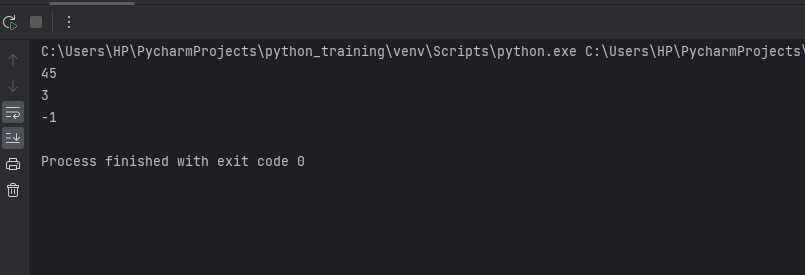
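The following is a minimal sketch of how such a class might look, combining the four operations in one place. The class name `RandomizedDS` is the one this article uses; the method names, the private attribute names and the choice to reject duplicates are assumptions made for illustration.

```python
import random


class RandomizedDS:
    """Set-like container with average O(1) insert, delete, search, get_random."""

    def __init__(self):
        self._values = []    # dynamic array holding every element
        self._index_of = {}  # element -> its index in self._values

    def insert(self, x):
        if x in self._index_of:
            return False
        self._index_of[x] = len(self._values)
        self._values.append(x)
        return True

    def delete(self, x):
        idx = self._index_of.get(x)
        if idx is None:
            return False
        last = self._values[-1]
        self._values[idx] = last    # move the last element into the gap
        self._index_of[last] = idx
        self._values.pop()
        del self._index_of[x]
        return True

    def search(self, x):
        return x in self._index_of

    def get_random(self):
        if not self._values:
            raise IndexError("get_random() called on an empty structure")
        return random.choice(self._values)

    def __len__(self):
        return len(self._values)


ds = RandomizedDS()
for item in (10, 20, 30, 40):
    ds.insert(item)
ds.delete(20)

print(ds.search(20))     # False
print(ds.search(40))     # True
print(len(ds))           # 3
print(ds.get_random())   # one of 10, 30 or 40
```

Elements must be hashable, since they are used as dictionary keys.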

Output:

Explanation:

The RandomizedDS class implements a data structure that allows efficient search, insertion, removal and random access. It keeps the elements in a list and uses a dictionary to map each element to its position in that list.
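The correctness of every operation rests on one invariant: each stored element's dictionary entry holds that element's current position in the list. A small checker, sketched here with the hypothetical names `check_invariant`, `values` and `index_of`, can make this concrete and is handy when testing an implementation.

```python
def check_invariant(values, index_of):
    """Return True if the dictionary and list describe the same elements."""
    if len(values) != len(index_of):
        return False
    return all(
        0 <= i < len(values) and values[i] == x
        for x, i in index_of.items()
    )


print(check_invariant(["a", "b"], {"a": 0, "b": 1}))  # True
print(check_invariant(["a", "b"], {"a": 1, "b": 0}))  # False: stale indices
```

The check itself is O(N), so it belongs in tests rather than inside the O(1) operations.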

Conclusion:

In simpler terms, we saw how combining two basic data structures, hash tables and arrays, gives us a container that performs well across the board. By exploiting what each one does best, we get a fast all-rounder. Hash tables use a hash of the key to reach an entry directly. Arrays store items in consecutive positions, which allows cheap appends and random picks by index. Combining them covers the gaps in each one's capabilities. The joint structure gives us fast addition, removal, lookup and random selection, even in huge collections.

The techniques involved are also simple to grasp at a high level. A hash function spreads keys evenly across the hash table's slots, and the dictionary records each element's position in the array. Reserving extra space avoids crowding, which would otherwise slow things down. While building real systems on these ideas adds complexity, the concepts are intuitive.

We specifically looked at guidelines for building such a data store in Python. Its standard dictionary and list types already supply the ingredients required for high efficiency. Gluing them together correctly allows versatile structures to be built with little effort.

In data analytics, such containers serve as building blocks. Fast operations that stay fast at large data sizes enable scalable architectures, and responsive storage and retrieval of information benefits end users. Understanding these basic techniques for creating data structures tailored to specific needs is valuable for engineers working on analytics pipelines. The article showed how combining complementary approaches gives efficient solutions that are greater than the sum of their parts. Analytics systems serving real-world demands can be built from these blocks.
