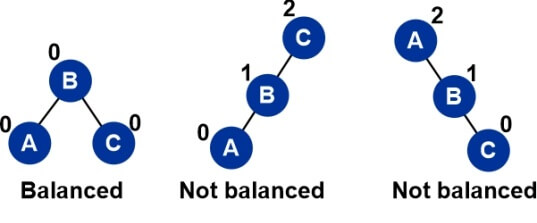

Properties of AVL Trees

The AVL tree was invented in 1962 by G. M. Adelson-Velsky and E. M. Landis, and it is named AVL in honor of its inventors. An AVL tree is a height-balanced binary search tree in which every node has a balance factor, computed by subtracting the height of the node's right subtree from the height of its left subtree. If every node's balance factor is -1, 0, or 1, the tree is considered balanced; otherwise, the tree needs to be rebalanced.

Balance Factor

Balance Factor = height(left subtree) - height(right subtree)
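As a minimal sketch of the definition above, the balance factor can be computed directly from subtree heights. The names `Node`, `height`, `balance_factor`, and `is_avl_balanced` are illustrative assumptions, not part of the original text, and the check covers only the height condition, not the BST ordering. A practical implementation would usually cache the height in each node instead of recomputing it.

```python
from typing import Optional


class Node:
    """A binary tree node (field names are illustrative)."""

    def __init__(self, key, left=None, right=None):
        self.key = key
        self.left = left
        self.right = right


def height(node: Optional[Node]) -> int:
    """Height counted in edges: an empty subtree is -1, a single node is 0."""
    if node is None:
        return -1
    return 1 + max(height(node.left), height(node.right))


def balance_factor(node: Optional[Node]) -> int:
    """height(left subtree) - height(right subtree)."""
    if node is None:
        return 0
    return height(node.left) - height(node.right)


def is_avl_balanced(node: Optional[Node]) -> bool:
    """True if every node's balance factor is -1, 0, or 1."""
    if node is None:
        return True
    return (abs(balance_factor(node)) <= 1
            and is_avl_balanced(node.left)
            and is_avl_balanced(node.right))


balanced = Node(2, Node(1), Node(3))             # 1 <- 2 -> 3
skewed = Node(1, right=Node(2, right=Node(3)))   # 1 -> 2 -> 3 (right chain)

print(balance_factor(balanced), is_avl_balanced(balanced))  # prints "0 True"
print(balance_factor(skewed), is_avl_balanced(skewed))      # prints "-2 False"
```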

Why use AVL Trees?

Most BST operations, including search, max, min, insert, and delete, take O(h) time, where h is the height of the BST. For a skewed binary tree, h can grow to n, so the cost of these operations can increase to O(n). If we make sure that the height of the tree stays O(log(n)) after every insertion and deletion, we get an upper bound of O(log(n)) for all of these operations. The height of an AVL tree is always O(log(n)), where n is the number of nodes in the tree.

Operations on AVL Trees

Because an AVL tree is also a binary search tree, all operations are performed the same way as in a binary search tree. Searching and traversing do not violate the AVL property. Insertion and deletion, however, can break it, so they need to be revisited.
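To illustrate why the height matters, the sketch below inserts the same seven keys into a plain, unbalanced BST in two different orders. The helper names `bst_insert` and `height` are assumptions made for this example. Sorted input produces a chain whose height is n - 1, while a favorable order gives the logarithmic height that an AVL tree guarantees for any order.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None


def bst_insert(root, key):
    """Plain BST insert with no rebalancing (iterative); returns the root."""
    new = Node(key)
    if root is None:
        return new
    cur = root
    while True:
        if key < cur.key:
            if cur.left is None:
                cur.left = new
                return root
            cur = cur.left
        else:
            if cur.right is None:
                cur.right = new
                return root
            cur = cur.right


def height(root):
    """Height in edges, computed level by level (empty tree is -1)."""
    h = -1
    level = [root] if root is not None else []
    while level:
        h += 1
        level = [child for node in level
                 for child in (node.left, node.right) if child is not None]
    return h


skewed = None
for k in range(1, 8):               # sorted input: 1, 2, ..., 7
    skewed = bst_insert(skewed, k)

bushy = None
for k in [4, 2, 6, 1, 3, 5, 7]:     # an order that happens to stay balanced
    bushy = bst_insert(bushy, k)

print(height(skewed), height(bushy))  # prints "6 2"
```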

Insertion in AVL Trees

To ensure that the tree remains an AVL tree after every insertion, the standard BST insert procedure must be extended with a rebalancing step. The following two basic operations, left rotation and right rotation, can be used to rebalance a BST without violating the BST property (keys(left) < key(root) < keys(right)).
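The two rotations can be sketched as follows. This is a minimal illustration that assumes nodes with `key`, `left`, and `right` fields; the function names are hypothetical, and height bookkeeping is left out to keep the pointer changes visible. The in-order key sequence is the same before and after a rotation, which is why the BST property survives.

```python
class Node:
    def __init__(self, key, left=None, right=None):
        self.key = key
        self.left = left
        self.right = right


def rotate_right(y):
    # Right rotation around y: y's left child x becomes the subtree root,
    # x's old right subtree becomes y's new left subtree.
    x = y.left
    y.left = x.right
    x.right = y
    return x  # new root of this subtree


def rotate_left(x):
    # Left rotation around x: the mirror image of rotate_right.
    y = x.right
    x.right = y.left
    y.left = x
    return y  # new root of this subtree


def inorder(node):
    """Keys in sorted order if the tree is a valid BST."""
    if node is None:
        return []
    return inorder(node.left) + [node.key] + inorder(node.right)


chain = Node(3, Node(2, Node(1)))   # left-leaning chain 3 -> 2 -> 1
root = rotate_right(chain)          # 2 becomes the root, with children 1 and 3
print(root.key, inorder(root))      # prints "2 [1, 2, 3]"
```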

The steps to take for insertion are:

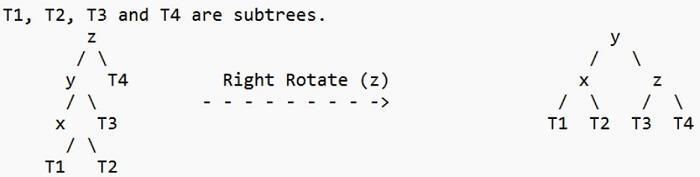

The operations to perform in the four cases mentioned above are listed below. In every case, only the subtree rooted at z needs to be rebalanced: once the height of that subtree returns to its pre-insertion value (after the appropriate rotations), the entire tree is balanced again.

1. Left Left Case

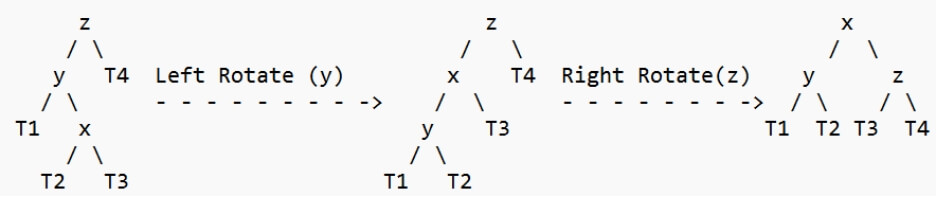

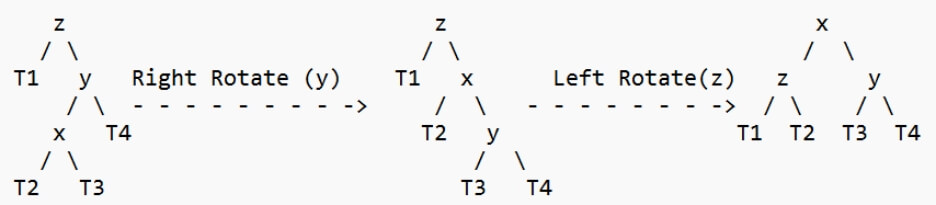

2. Left Right Case

3. Right Right Case

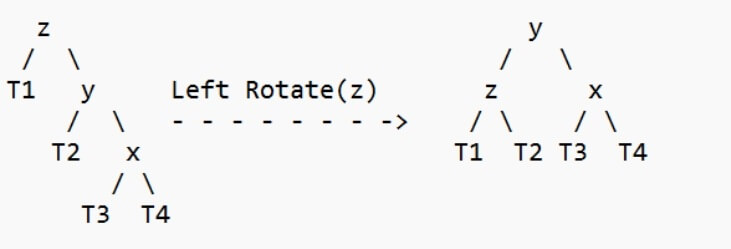

4. Right Left Case
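The four cases above can be combined into one recursive insert, sketched below. This is one common way to write it, not necessarily the exact procedure the figures show. It assumes each node caches its own height (in edges, so a leaf has height 0), that duplicate keys are ignored, and that the unbalanced node found on the way back up plays the role of z; all names are illustrative.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.height = 0  # height in edges; a leaf has height 0


def h(node):
    return node.height if node is not None else -1


def update(node):
    node.height = 1 + max(h(node.left), h(node.right))


def balance(node):
    return h(node.left) - h(node.right)


def rotate_right(z):
    y = z.left
    z.left = y.right
    y.right = z
    update(z)  # z is now below y, so refresh it first
    update(y)
    return y


def rotate_left(z):
    y = z.right
    z.right = y.left
    y.left = z
    update(z)
    update(y)
    return y


def insert(node, key):
    # Step 1: ordinary BST insertion.
    if node is None:
        return Node(key)
    if key < node.key:
        node.left = insert(node.left, key)
    elif key > node.key:
        node.right = insert(node.right, key)
    else:
        return node  # assumption: duplicate keys are ignored

    # Step 2: refresh the cached height and compute the balance factor.
    update(node)
    bf = balance(node)

    # Step 3: if this node (z) is unbalanced, apply the matching case.
    if bf > 1 and key < node.left.key:       # Left Left
        return rotate_right(node)
    if bf > 1 and key > node.left.key:       # Left Right
        node.left = rotate_left(node.left)
        return rotate_right(node)
    if bf < -1 and key > node.right.key:     # Right Right
        return rotate_left(node)
    if bf < -1 and key < node.right.key:     # Right Left
        node.right = rotate_right(node.right)
        return rotate_left(node)
    return node


root = None
for k in range(1, 8):          # sorted input would skew a plain BST
    root = insert(root, k)
print(root.key, root.height)   # prints "4 2"
```

Inserting sorted keys exercises only the Right Right case. Inserting 3, 1, 2 triggers the Left Right case, and 1, 3, 2 triggers the Right Left case; both end with 2 at the root.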

Advantages of AVL Trees

Disadvantages of AVL Trees

AVL trees serve a specific purpose: they keep binary search trees balanced, which is useful in applications such as database indexing. The only difference from an ordinary binary search tree is that the balance factor must be maintained, so the tree has to remain balanced after every operation. This is achieved with the AVL tree rotations described earlier.

Properties of AVL Tree

Property 1: The maximum number of nodes in an AVL tree of height H = $2^{H+1} - 1$

Maximum possible number of nodes in an AVL tree of height 3 = $2^{3+1} - 1 = 16 - 1 = 15$

Thus, an AVL tree of height 3 can hold at most 15 nodes.

Property 2: The minimum number of nodes in an AVL tree of height H is given by a recurrence relation:

$N(H) = N(H-1) + N(H-2) + 1$

Base conditions for this recurrence relation are $N(0) = 1$ and $N(1) = 2$.

Property 3: The minimum possible height of an AVL tree with N nodes = $\lfloor \log_2 N \rfloor$

Minimum possible height of an AVL tree with 8 nodes = $\lfloor \log_2 8 \rfloor = \lfloor \log_2 2^3 \rfloor = \lfloor 3 \log_2 2 \rfloor = \lfloor 3 \rfloor = 3$

Property 4: The maximum height of an AVL tree with N nodes is computed using the same recurrence relation, as the largest H for which $N(H) \le N$:

$N(H) = N(H-1) + N(H-2) + 1$

Base conditions for this recurrence relation are $N(0) = 1$ and $N(1) = 2$.

NOTE:

Problems on AVL Trees

Problem 1: Find the minimum number of nodes needed to build an AVL tree with height = 3.

Solution: The minimum number of nodes in an AVL tree of height H is given by the recurrence relation

$N(H) = N(H-1) + N(H-2) + 1$, where $N(0) = 1$ and $N(1) = 2$

Step-01: Substituting H = 3 in the recurrence relation, we get

$$
\begin{aligned}
N(3) &= N(3-1) + N(3-2) + 1 \\
N(3) &= N(2) + N(1) + 1 \\
N(3) &= N(2) + 2 + 1 \quad \text{(using the base condition)} \\
N(3) &= N(2) + 3 \quad \ldots (1)
\end{aligned}
$$

To solve this recurrence relation, we need the value of N(2).

Step-02: Substituting H = 2 in the recurrence relation, we get

$$
\begin{aligned}
N(2) &= N(2-1) + N(2-2) + 1 \\
N(2) &= N(1) + N(0) + 1 \\
N(2) &= 2 + 1 + 1 \quad \text{(using the base conditions)} \\
\therefore N(2) &= 4 \quad \ldots (2)
\end{aligned}
$$

Step-03: Using (2) in (1), we get

$$
\begin{aligned}
N(3) &= 4 + 3 \\
\therefore N(3) &= 7
\end{aligned}
$$

Thus, the minimum number of nodes required to construct an AVL tree of height 3 = 7.
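The four properties and the worked problem above can be checked numerically. The sketch below is a direct transcription of the stated formulas; the function names are assumptions chosen for this example, heights are counted in edges to match N(0) = 1, and n is assumed to be at least 1.

```python
from functools import lru_cache


def max_nodes(height: int) -> int:
    """Property 1: 2^(H+1) - 1."""
    return 2 ** (height + 1) - 1


@lru_cache(maxsize=None)
def min_nodes(height: int) -> int:
    """Property 2: N(H) = N(H-1) + N(H-2) + 1 with N(0) = 1, N(1) = 2."""
    if height == 0:
        return 1
    if height == 1:
        return 2
    return min_nodes(height - 1) + min_nodes(height - 2) + 1


def min_height(n: int) -> int:
    """Property 3: floor(log2(n)), computed with integer arithmetic."""
    return n.bit_length() - 1


def max_height(n: int) -> int:
    """Property 4: the largest H whose minimum node count fits in n nodes."""
    height = 0
    while min_nodes(height + 1) <= n:
        height += 1
    return height


print(max_nodes(3))    # prints "15"
print(min_nodes(3))    # prints "7" (Problem 1)
print(min_height(8))   # prints "3"
print(max_height(7))   # prints "3"
```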
